What happened: Despite the glossy PR videos of backflipping bots and dishwasher-loading demos, experts admit that bipedal humanoids still haven't "solved" the fundamentals of stairs and doorways. While neural networks have turbocharged vision and planning, the industry is hitting a "force wall": robots can move their limbs into precise poses but still lack the intuitive, real-time understanding of inertia and tactile resistance that humans rely on to open a stubborn bottle or to avoid crushing an egg.

Why it matters: The shift from "hand-engineered" algorithms to reinforcement learning has produced fluid movement, but it has often abstracted away the physics of force control. Current Vision-Language-Action (VLA) models essentially learn to move between defined snapshots in space, making them "positionally precise but physically illiterate." To achieve the dream of the autonomous butler, robots need to graduate from just "seeing" the world to actually "feeling" it through first-class force regulation.

Wider context: We are currently in what roboticists call the "Volta stage" of the field (poking frogs with electrodes and hoping for a twitch) rather than the "Maxwell stage" of governing equations. Companies like Agility and Boston Dynamics are finding that while deep learning can fake a breakdance, it struggles to generalize to the messy, high-inertia interactions required for truly useful domestic or industrial labor unless the robot moves at a glacial pace.

Why Do Humanoid Robots Still Struggle With the Small Stuff? — Quanta Magazine

Droid Brief Take: It turns out you can’t just "ChatGPT" your way through a doorway if you don’t understand how heavy the door is. We’ve spent a decade teaching robots how to look cool on YouTube, only to realize they still don’t know how not to smash the marker into the whiteboard.

Key Takeaways:

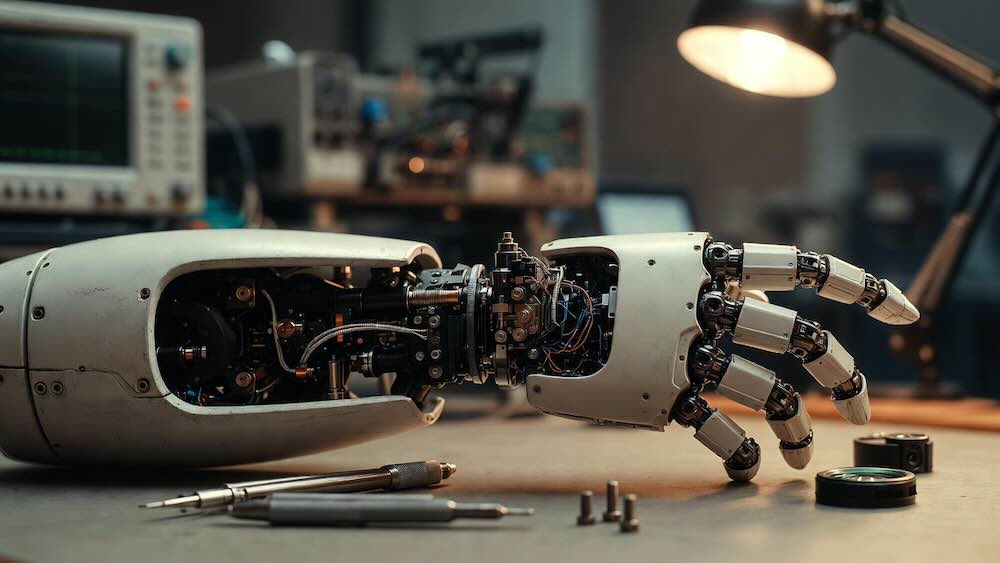

- The Force Deficit: Most modern humanoid AI focuses on "position control" (moving to a spot) rather than "force control" (managing pressure), leading to clumsy interactions with delicate objects.

- Simulation Gaps: While neural networks learn "policies" in digital twins, those simulations often fail to explicitly teach the fundamental physics of force and inertia required for real-world reliability.

- Hardware Enablers: Proprioceptive electric motors have replaced clunky hydraulics, providing the "animal-like nimbleness" needed for locomotion, but the software brains are still catching up to the hardware's potential.
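
To make the "position control" versus "force control" distinction in the first takeaway concrete, here is a toy one-dimensional sketch. It is not taken from the Quanta piece or from any real robot stack: the spring-like contact model, the stiffness, the 2 mm perception error, the gains, and the function names are all illustrative assumptions. A fingertip approaches a stiff surface; the position controller drives toward a target pose that happens to sit slightly inside the object, while the force controller advances slowly and then adjusts its position based on the measured contact force.

```python
# Toy 1-D comparison of position control and force control.
# All constants below are assumptions chosen for illustration only.

STIFFNESS = 50_000.0   # N/m, assumed stiffness of the contacted object
SURFACE = 0.0          # m, assumed true location of the object's surface
START = -0.002         # m, fingertip starts 2 mm away from the surface


def contact_force(x):
    """Spring-like contact: no force until the fingertip reaches the surface."""
    return STIFFNESS * max(0.0, x - SURFACE)


def position_control(x_target, steps=500, gain=0.2):
    """Drive the fingertip toward a target pose, ignoring contact force."""
    x, peak = START, 0.0
    for _ in range(steps):
        x += gain * (x_target - x)          # close a fraction of the pose error
        peak = max(peak, contact_force(x))
    return contact_force(x), peak


def force_control(f_target, steps=500, admittance=1e-5, approach_step=2e-5):
    """Creep forward until contact, then regulate the measured force."""
    x, peak = START, 0.0
    for _ in range(steps):
        f = contact_force(x)
        if f == 0.0:
            x += approach_step              # free space: advance slowly
        else:
            x += admittance * (f_target - f)  # in contact: correct force error
        peak = max(peak, contact_force(x))
    return contact_force(x), peak


if __name__ == "__main__":
    # Assumed scenario: perception places the surface 2 mm deeper than it is.
    pos_final, pos_peak = position_control(x_target=0.002)
    frc_final, frc_peak = force_control(f_target=2.0)
    print(f"position control: final {pos_final:.1f} N, peak {pos_peak:.1f} N")
    print(f"force control:    final {frc_final:.1f} N, peak {frc_peak:.1f} N")
```

Under these assumed numbers, a 2 mm pose error turns into roughly 100 N of contact force for the position controller, while the force controller settles near its 2 N target. The real problem is harder (inertia, friction, sensor noise, and multi-joint dynamics are all left out), but the sketch shows why a pose-accurate policy can still crush the egg.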

Related News

Robot Operating Systems & Software Stacks — Exploring the invisible infrastructure that tries (and sometimes fails) to bridge the gap between AI and physical actuators.

Embodied AI: Why Bodies Matter — A deeper dive into why "brain-only" AI isn't enough to navigate a physical world designed for human sensory feedback.