Your hands are extraordinary. Each one contains 27 bones, 34 muscles, and over 17,000 touch receptors — all working in concert to perform thousands of tasks every day without conscious thought. You thread needles, crack eggs, tie shoelaces, and catch thrown objects using a biological system refined over millions of years of evolution.

Now try to build a machine that does the same thing.

This is the central challenge of robotic manipulation, and it's arguably the single biggest obstacle standing between today's humanoid robots and genuine real-world usefulness. A robot that can walk beautifully but can't reliably pick up a coffee cup has limited practical value. Getting hands right is what will ultimately determine which humanoid platforms succeed — and which remain expensive curiosities.

Why Hands Are the Hardest Problem in Humanoid Robotics

Bipedal walking gets the headlines. Backflips make the viral videos. But in the engineering labs where humanoid robots are actually being built, hands consume a disproportionate share of attention, funding, and frustration.

The reason is contact complexity. When a robot walks, its feet interact with a single surface — usually a flat floor. Each foot has one or two contact points at any given moment. A hand manipulating an object might have dozens of simultaneous contact points, each experiencing different forces, friction coefficients, and deformations. The physics of multi-finger grasping involves far more variables than bipedal locomotion, and those variables change continuously as an object shifts, rotates, or deforms in the grip.
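To make that bookkeeping concrete, here is a minimal sketch of the balance a grasp has to maintain: every contact adds three force components, and the sum of all contact forces and torques has to hold the object still. The `Contact` type, the `net_wrench` helper, and the 0.5 kg pinch example are illustrative assumptions, not taken from any particular robot stack.

```python
from typing import NamedTuple, Sequence, Tuple

Vec3 = Tuple[float, float, float]


class Contact(NamedTuple):
    """One fingertip contact (hypothetical type for illustration)."""
    position: Vec3  # metres, measured from the object's centre of mass
    force: Vec3     # newtons, applied by the finger to the object


def cross(a: Vec3, b: Vec3) -> Vec3:
    """Cross product a x b, used to turn a force into a torque."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def net_wrench(contacts: Sequence[Contact]) -> Tuple[Vec3, Vec3]:
    """Sum the forces and torques that all contacts exert on the object."""
    force = [0.0, 0.0, 0.0]
    torque = [0.0, 0.0, 0.0]
    for c in contacts:
        t = cross(c.position, c.force)
        for axis in range(3):
            force[axis] += c.force[axis]
            torque[axis] += t[axis]
    return (force[0], force[1], force[2]), (torque[0], torque[1], torque[2])


# Two-finger pinch on an assumed 0.5 kg object: each finger squeezes inward
# with 10 N and carries half the weight (0.5 * 9.81 / 2 N) through friction.
half_weight = 0.5 * 9.81 / 2
pinch = [
    Contact(position=(-0.03, 0.0, 0.0), force=(10.0, 0.0, half_weight)),
    Contact(position=(0.03, 0.0, 0.0), force=(-10.0, 0.0, half_weight)),
]
force, torque = net_wrench(pinch)
print(force)   # upward component should match the object's weight
print(torque)  # should be approximately zero for a balanced pinch
```

With two contacts there are six force components to choose; a hand with dozens of contacts has dozens of such triples, all of which shift as the object moves.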

Then there's the sheer range of what hands need to do. The same hand that lifts a 5 kg dumbbell must also thread a cable through a narrow slot, turn a key in a lock, and handle a ripe tomato without crushing it. No other part of a humanoid robot faces such extreme variation in task requirements.

Grippers vs. Anthropomorphic Hands: Two Philosophies

Not every robot needs human-shaped hands. The robotics industry has long relied on grippers — simpler end effectors designed for specific tasks — and they remain dominant in industrial automation for good reason.

Industrial Grippers

The simplest grippers are parallel-jaw designs: two flat surfaces that close together to pinch an object. They're cheap, reliable, and effective for structured environments where the objects are predictable. Vacuum grippers use suction to lift flat or smooth items. Soft grippers made from compliant materials like silicone can conform around irregular shapes. These devices typically have one to three degrees of freedom — just enough to open, close, and perhaps rotate.

For a factory picking identical boxes off a conveyor belt, a simple gripper is the right tool. But for a humanoid robot entering a human home or an unstructured warehouse, the limitations become obvious quickly. A single parallel-jaw gripper can't turn a door handle, operate a light switch, and fold a towel; covering all three would mean swapping end effectors between tasks.

Anthropomorphic Hands

This is where human-shaped hands enter the picture. An anthropomorphic robotic hand attempts to replicate the structure and capability of the human hand — five fingers, an opposable thumb, and enough degrees of freedom to perform a wide range of grasps and manipulations.

The engineering rationale for the human form factor is straightforward: our entire built environment — every tool, handle, button, and container — was designed for human hands. Rather than redesigning the world for robots, it's more practical to give robots hands that can operate in the world as it already exists.

Modern anthropomorphic hands typically feature between 11 and 27 degrees of freedom. Tesla's Optimus Gen 2 hands have 11 degrees of freedom per hand, while the Gen 3 design — revealed in early 2026 — uses a tendon-driven system with 25 actuators per forearm and hand, representing a dramatic increase in dexterity. China's MATRIX-3 humanoid features a 27-degree-of-freedom hand that closely mirrors human anatomy. Figure's Figure 03, Agility's Digit, and Boston Dynamics' Atlas each take different approaches to the same fundamental challenge.

How Robot Hands Actually Work

Behind the external form of an anthropomorphic hand lies a set of interconnected mechanical and electronic systems. Here's what's inside.

Actuation: Making Fingers Move

There are two dominant approaches to powering robotic fingers, and the debate between them is one of the liveliest in the field.

Tendon-driven systems place the motors in the forearm and connect them to the fingers via cables or tendons — much like the human hand, where the muscles that control your fingers are actually located in your forearm, connected by long tendons that run through your wrist. This keeps the hand itself slim and lightweight, allowing for more natural proportions and faster movement. The downside is complexity: routing, tensioning, and maintaining dozens of thin cables is an ongoing engineering headache. Tesla's Optimus uses this approach, as does the open-source DexHand project and several Chinese platforms including MATRIX-3.

In-hand actuators embed small motors directly into the finger joints. This simplifies the mechanical linkage, with no cables to route or tension, but makes the fingers bulkier and heavier. The added mass in the fingers also increases the inertia the hand must overcome for quick, precise movements.

Linkage-driven systems offer a middle ground, using rigid mechanical linkages rather than cables to transmit force from motors to finger joints. Companies like Linkerbot offer both tendon-driven and linkage-driven options, allowing integrators to choose based on their application's requirements for stiffness, compliance, and maintenance.

A smaller but growing community is exploring artificial muscles — actuators made from electroactive polymers, shape-memory alloys, or pneumatic systems that contract and expand like biological muscle tissue. These offer the tantalising promise of soft, compliant, inherently safe actuation, but current designs lack the force, speed, and precision of motor-driven systems.
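For the tendon-driven case, a common first-order model treats each joint as a pulley: the cable length a forearm motor must reel in is the sum of pulley radius times joint rotation along the tendon's path. The sketch below assumes constant pulley radii and an inextensible cable, and the radii, angles, and function names are made-up values for illustration rather than figures from any of the hands named above.

```python
import math
from typing import Sequence


def tendon_excursion(pulley_radii_m: Sequence[float],
                     joint_deltas_rad: Sequence[float]) -> float:
    """Cable length (metres) pulled in when each joint rotates by its delta.

    Assumes one tendon wrapping a constant-radius pulley at every joint.
    """
    if len(pulley_radii_m) != len(joint_deltas_rad):
        raise ValueError("need one pulley radius per joint")
    return sum(r * dq for r, dq in zip(pulley_radii_m, joint_deltas_rad))


def spool_rotation_rad(excursion_m: float, spool_radius_m: float) -> float:
    """Angle the forearm motor's spool must turn to take up that cable."""
    return excursion_m / spool_radius_m


# Hypothetical three-joint finger curling towards the palm.
radii = [0.008, 0.006, 0.005]  # metres, assumed values
curl = [math.radians(60), math.radians(70), math.radians(45)]

pull = tendon_excursion(radii, curl)
print(f"cable pull: {pull * 1000:.1f} mm")
print(f"spool turn: {math.degrees(spool_rotation_rad(pull, 0.010)):.0f} degrees")
```

Real tendons stretch and rub against their sheaths, which is part of why tensioning and maintenance are such a headache in practice.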

Sensing: Teaching Robots to Feel

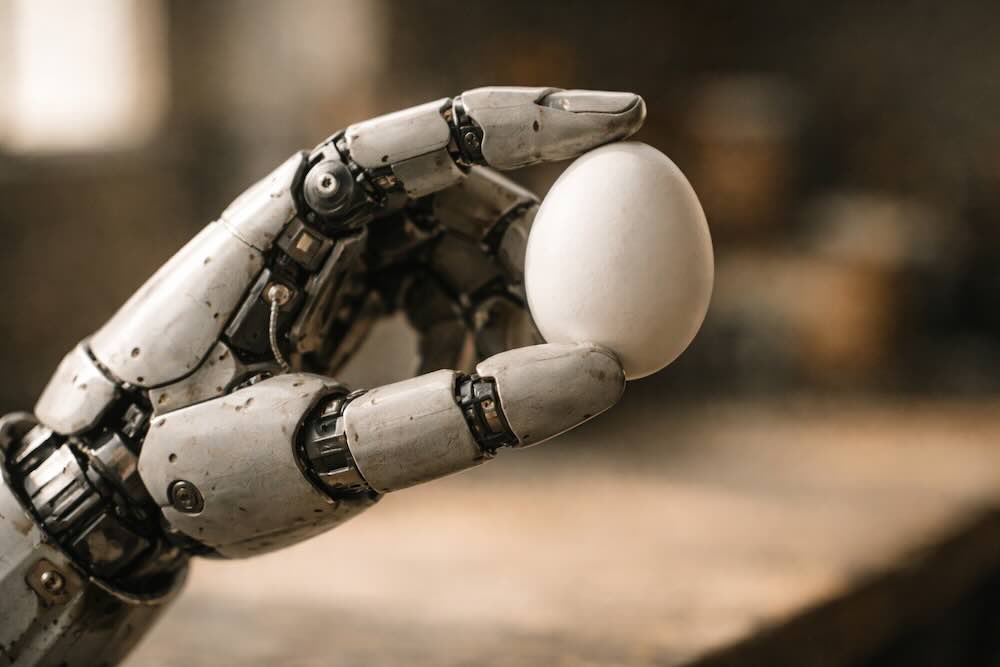

A hand without sensation is a hand that crushes wine glasses and drops eggs. Tactile sensing is what transforms a mechanical gripper into something approaching a useful manipulator.

The human fingertip packs around 2,500 touch receptors into a space smaller than a postage stamp. These receptors detect pressure, vibration, temperature, texture, and the precise geometry of contact — all simultaneously, all in real time. Replicating even a fraction of this capability in a robot is extraordinarily difficult.

Current robotic tactile sensors fall into several categories:

- Resistive sensors change their electrical resistance when compressed. They're simple and cheap, but offer limited spatial resolution and degrade over time.

- Capacitive sensors measure changes in electrical capacitance as surfaces deform under pressure. They offer better sensitivity and can detect both normal and shear forces.

- Piezoelectric sensors generate an electrical signal in response to mechanical stress. They're excellent at detecting dynamic events like vibration and slip, but less suited to measuring static pressure.

- Vision-based tactile sensors — such as the GelSight system developed at MIT — use a camera behind a soft, deformable membrane to capture high-resolution images of contact geometry. When an object presses into the membrane, the camera sees the resulting deformation in remarkable detail. These sensors achieve spatial resolution that rivals or exceeds human fingertips.

- Triaxial sensors represent a newer frontier. They measure force along three axes, separating normal pressure from shear forces in real time, which is critical for detecting when an object is about to slip from a grasp.
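Why separate normal and shear readings matter can be sketched with the Coulomb friction model, a standard textbook approximation rather than anything specific to the sensors above: a contact holds while the shear force stays below the friction coefficient times the normal force. The function name, the axis convention (z is normal to the sensor surface), and the friction coefficient are assumptions for illustration.

```python
import math


def slip_margin_n(fx: float, fy: float, fz: float, mu: float) -> float:
    """Newtons of shear the contact can still absorb before sliding.

    fx, fy: shear force components along the sensor surface.
    fz:     normal force pressing into the surface.
    mu:     assumed coefficient of friction between fingertip and object.
    A result at or below zero means the contact is at or past its limit.
    """
    shear = math.hypot(fx, fy)
    return mu * fz - shear


# Hypothetical reading from one triaxial sensor element, in newtons.
reading = (0.8, 0.6, 4.0)
margin = slip_margin_n(*reading, mu=0.5)
status = "holding" if margin > 0 else "slipping or about to slip"
print(f"{status} (margin {margin:.2f} N)")
```

A sensor that only reports normal pressure cannot evaluate this condition, because the shear term is missing.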

The most advanced prototype systems are now achieving resolution that exceeds human touch. Researchers at Analog Devices have developed tactile sensor prototypes with spatial resolution five times higher than a human fingertip, integrating pressure, vibration, and temperature sensing into compact packages. The F-TAC Hand, published in Nature Machine Intelligence in 2025, demonstrated high-resolution tactile sensing across 70% of the hand's surface area — and showed in 600 real-world trials that full-hand tactile feedback significantly outperformed systems without it.

Tesla's Optimus integrates custom tactile sensors with metallic tendons in the fingertips, providing real-time feedback on grip force and contact geometry. An in-hand controller processes this sensory data to adjust grasp pressure on the fly — essential for tasks like handling eggs or sorting delicate battery cells.

The Slip Detection Problem

One of the most critical — and most underappreciated — challenges in robotic grasping is detecting and preventing slip. When you hold a glass of water, your hand unconsciously monitors micro-movements and adjusts grip force hundreds of times per second. You don't think about it. Your nervous system handles it automatically.

For robots, slip detection requires combining data from multiple tactile sensors, processing it through machine learning algorithms, and commanding a grip adjustment — all within milliseconds. Recent work has shown that neural network-based slip detection systems, trained on datasets of hundreds of thousands of grasp attempts across multiple grasp types, can achieve high accuracy. But bridging the gap between laboratory results and reliable real-world performance remains an active area of research.
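The sense, decide, act loop can be sketched with a much simpler detector than the neural networks described above. This version swaps in a fixed threshold on vibration energy as a stand-in for the learned model; the function names, threshold, and force values are hypothetical and would need tuning against real sensor data.

```python
from typing import Sequence


def vibration_energy(samples: Sequence[float]) -> float:
    """Mean squared sample-to-sample change in a tactile signal window.

    A crude proxy for the high-frequency vibration that accompanies slip.
    """
    if len(samples) < 2:
        return 0.0
    diffs = [b - a for a, b in zip(samples, samples[1:])]
    return sum(d * d for d in diffs) / len(diffs)


def adjust_grip(force_n: float,
                samples: Sequence[float],
                threshold: float = 0.01,
                step_n: float = 0.5,
                max_force_n: float = 20.0) -> float:
    """Return the next grip force command given the latest sensor window.

    If the window looks like slip, squeeze a little harder, but never
    beyond max_force_n so that fragile objects are not crushed.
    """
    if vibration_energy(samples) > threshold:
        return min(force_n + step_n, max_force_n)
    return force_n


steady = [1.0] * 8                 # quiet signal: object at rest in the grip
chatter = [1.0, 1.5, 1.0, 1.5]     # oscillating signal: likely micro-slip

print(adjust_grip(5.0, steady))    # force unchanged
print(adjust_grip(5.0, chatter))   # force raised by one step
```

In a real hand this function would run inside a fast control loop, and the threshold rule would be replaced by whatever classifier the team has trained.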

Grasping Strategies: How Robots Pick Things Up

Roboticists classify grasps into taxonomies based on how the hand contacts the object. The two broadest categories are:

- Power grasps — where the object is held in the palm with all fingers wrapped around it, maximising contact area and grip strength. Think of how you hold a hammer or a jar you're trying to open.

- Precision grasps — where the object is held between the fingertips, sacrificing grip strength for fine control. Think of how you hold a pen or pick up a coin from a table.

Within these categories, researchers have identified dozens of distinct grasp types — pinch, tripod, lateral, spherical, cylindrical, and many more. A truly dexterous robot hand needs to transition fluidly between these grasp types depending on the object and the task.

Beyond grasping, there's in-hand manipulation — the ability to reorient or reposition an object within the hand without setting it down. When you rotate a pen between your fingers to use the other end, or adjust your grip on a screwdriver mid-turn, you're performing in-hand manipulation. This remains one of the most difficult capabilities to replicate in a robot, requiring precise coordination of multiple fingers with continuous tactile feedback.

The Role of AI in Manipulation

The mechanical hardware is only half the story. Even a perfectly designed hand is useless without the intelligence to control it.

Traditional robotic manipulation relied on explicit programming: engineers would specify exact joint angles, forces, and trajectories for each task. This worked in highly structured factory environments but fell apart in the face of any variation. Move the coffee cup two centimetres to the left, and the program fails.

Modern approaches use machine learning to teach robots to grasp and manipulate through experience rather than explicit instruction. The main techniques include:

- Imitation learning — a human demonstrates a task (often via teleoperation), and the robot learns to replicate the movements. Tesla has been training Optimus this way, with human operators wearing motion-capture gloves to demonstrate manipulation tasks.

- Reinforcement learning — the robot tries actions repeatedly in simulation, receiving rewards for success and penalties for failure, gradually discovering effective strategies. This is how many of the most impressive dexterous manipulation demonstrations have been achieved.

- Sim-to-real transfer — training in simulated environments (using tools like NVIDIA's Isaac Sim or MuJoCo) and then transferring the learned behaviours to physical robots. This avoids the time and expense of learning on real hardware, but the "reality gap" between simulation and the physical world remains a significant challenge — especially for hands, where contact physics are far more complex than for locomotion.

- Foundation models — large language models and vision-language models are increasingly being used to provide high-level task understanding. A robot might use a foundation model to interpret the instruction "make me a cup of tea" and break it down into a sequence of manipulation subtasks, while lower-level controllers handle the actual joint movements.
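As a rough illustration of the reward signal in the reinforcement learning approach, the sketch below scores one simulated grasp attempt. The `GraspOutcome` fields, the weights, and the force limit are invented for this example; real training setups typically use richer, task-specific rewards.

```python
from dataclasses import dataclass


@dataclass
class GraspOutcome:
    """Summary of one simulated grasp attempt (hypothetical fields)."""
    lifted: bool         # object left the table at some point
    dropped: bool        # object fell out of the hand afterwards
    peak_force_n: float  # largest fingertip force during the attempt


def grasp_reward(outcome: GraspOutcome,
                 force_limit_n: float = 15.0,
                 force_penalty_per_n: float = 0.05) -> float:
    """Reward success, penalise failure, and discourage crushing grips."""
    reward = 0.0
    if outcome.lifted:
        reward += 1.0
    if outcome.dropped:
        reward -= 1.0
    # Only force above the assumed limit is penalised.
    excess = max(0.0, outcome.peak_force_n - force_limit_n)
    reward -= force_penalty_per_n * excess
    return reward


gentle_success = GraspOutcome(lifted=True, dropped=False, peak_force_n=10.0)
crushing_failure = GraspOutcome(lifted=False, dropped=True, peak_force_n=25.0)

print(grasp_reward(gentle_success))
print(grasp_reward(crushing_failure))
```

The learning algorithm never sees rules like "hold eggs gently"; it only sees numbers like these, repeated over many simulated attempts, and adjusts the policy to make them larger.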

One of the persistent challenges is that hand simulation lags behind the rest of robotics simulation. As Rob Knight, a veteran robot hand designer, has noted, tools like Isaac Sim were built primarily for large robots on flat surfaces and still treat hands as an afterthought. The rich, multi-point contact physics that hands require are much harder to simulate accurately than foot-ground interactions.

Where We Are Today

The current state of humanoid robot hands is a study in rapid but uneven progress.

On the hardware side, the capabilities are genuinely impressive. Modern anthropomorphic hands can pick up eggs without cracking them, fold clothing (slowly), sort small components, and use basic tools. The mechanical designs — especially tendon-driven systems — have reached a level of sophistication where, as Knight puts it, the mechanical side is largely solvable. Companies like Linkerbot are now offering commercial dexterous hands with over 20 degrees of freedom and multi-modal tactile sensing at price points accessible to researchers.

On the control side, significant gaps remain. Most demonstrations of humanoid robots performing impressive manipulation tasks still involve some degree of teleoperation — a human operator guiding the robot's movements remotely, with the robot's AI handling lower-level motor control. Fully autonomous dexterous manipulation in unstructured environments — the kind of effortless object handling humans take for granted — is not yet reliably achieved by any platform.

The honest assessment, shared by many in the industry, is that dexterous hands are currently the primary bottleneck preventing humanoid robots from achieving broad real-world utility. The legs work. The perception systems work. The AI reasoning is improving rapidly. But the ability to reliably interact with the physical world through skilled hand manipulation is what will ultimately determine the pace of humanoid robot deployment.

What Comes Next

Several converging trends suggest that manipulation capability is about to accelerate:

- Better tactile sensing at lower cost. Advances in MEMS fabrication, flexible electronics, and vision-based tactile sensors are driving down the cost and increasing the resolution of robotic touch. The challenge of making sensors that are simultaneously sensitive, durable, and affordable is being attacked from multiple directions.

- Improved simulation tools. Dedicated hand simulation environments are beginning to emerge, helping hand simulation catch up to where locomotion simulation already was five years ago.

- Scale in training data. As more humanoid robots are deployed — even in limited pilot programmes — the volume of real-world manipulation data available for training AI systems grows. Tesla's strategy of deploying Optimus in its own factories, even for basic tasks, is partly motivated by this data-collection imperative.

- Component ecosystem maturation. A growing supply chain of specialised dexterous hand manufacturers, tactile sensor companies, and actuator suppliers is making it easier and cheaper for humanoid robot companies to integrate high-quality hand hardware without building everything from scratch.

- AI-driven grasp planning. Foundation models are beginning to enable robots to plan grasps for novel objects they've never encountered — moving beyond the need to train on every specific item.

The trajectory is clear, even if the timeline is uncertain. Once robust dexterous manipulation is solved — once a humanoid robot can reliably interact with tools, objects, and environments the way a human worker can — the economic case for deployment transforms from speculative to compelling. Dexterous hands won't just be a component of humanoid robots. They'll be the component that unlocks the entire industry.

Key Terms

- Degrees of freedom (DoF) — the number of independent movements a joint or system can make. More DoF generally means more dexterity, but also more complexity to control.

- End effector — the device at the end of a robotic arm that interacts with the environment. Grippers and hands are both types of end effector.

- Tendon-driven — an actuation method where motors pull cables (tendons) to move joints, mimicking how the human hand is controlled by muscles in the forearm.

- In-hand manipulation — the ability to reposition or reorient an object using only the fingers, without setting it down.

- Sim-to-real transfer — training a robot's AI in a computer simulation and then deploying the learned behaviour on a physical robot.

- Tactile sensing — the ability to detect and measure physical contact, pressure, texture, slip, and temperature through touch.

- Grasp taxonomy — a classification system for the different ways a hand can hold an object, from power grasps to precision pinches.

- Teleoperation — remote control of a robot by a human operator, often used to demonstrate tasks that the robot then learns to perform autonomously.

This article is part of Droid Brief's How Humanoid Robots Work series. For more on the systems that make humanoid robots tick, explore our guides to Actuators & Motors, Sensors & Perception, and AI & The Robot Brain.