A humanoid robot is, at its core, a distributed computing system that happens to walk on two legs. Dozens of motors, hundreds of sensors, multiple cameras, and one or more onboard computers all need to exchange data with millisecond-level timing, while simultaneously staying connected to human operators, cloud-based AI services, and potentially other robots sharing the same workspace.

Communication in a humanoid robot operates on two fundamentally different scales. Internally, data must flow between components inside the robot's own body at blistering speed. Externally, the robot needs wireless or wired links to the outside world. Getting both right is one of the less glamorous but absolutely critical engineering challenges in humanoid robotics.

This article breaks down how these communication systems work, why they matter, and where the technology is heading.

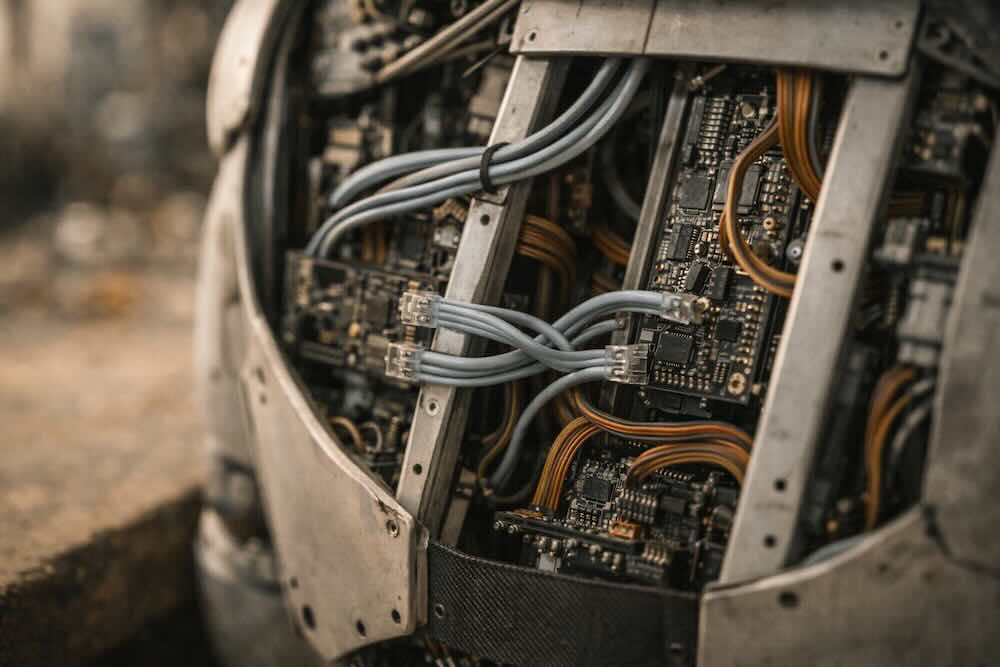

The Internal Nervous System

Think of a humanoid robot's internal communication network as its nervous system. Every joint actuator, every sensor, every fingertip pressure pad needs to report its state and receive commands hundreds to thousands of times per second. A robot with 40 or more degrees of freedom, not unusual for a modern humanoid, generates a large volume of real-time data that must be delivered within each control cycle.

Fieldbus Protocols: The Backbone

The workhorse technology for internal robot communication is the fieldbus — a standardised digital communication protocol that connects distributed sensors and actuators to a central controller. Several protocols are in common use, but one has come to dominate modern humanoid design.

EtherCAT (Ethernet for Control Automation Technology) has emerged as the leading internal communication standard for humanoid robots. Based on standard Ethernet hardware, EtherCAT achieves remarkably low latency by using a clever "processing on the fly" architecture: data packets pass through each device on the bus in sequence, with each node inserting or extracting its own data as the packet streams past. This means the master controller can communicate with every joint and sensor in the robot in a single network cycle, often completing the entire round trip in well under a millisecond.
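The "processing on the fly" idea can be illustrated with a toy simulation. This is only a sketch of the concept, not the EtherCAT wire format or any real master library: the `Node` class, the one-slot-per-node frame layout, and the integer values are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Node:
    """One hypothetical joint drive on the bus."""
    offset: int        # index of this node's slot in the frame
    position: int = 0  # latest measured value, written into the frame
    command: int = 0   # latest command, read out of the frame

    def process(self, frame: list) -> None:
        # Read the command the controller placed in this node's slot...
        self.command = frame[self.offset]
        # ...then overwrite the same slot with fresh feedback.
        frame[self.offset] = self.position


def run_cycle(commands: list, nodes: list) -> list:
    """Send one frame down the chain and return it as the controller sees it."""
    frame = list(commands)  # a single frame carries a slot for every node
    for node in nodes:      # the frame visits each node in wiring order
        node.process(frame)
    return frame            # slots now hold feedback instead of commands


nodes = [Node(offset=i, position=100 + i) for i in range(3)]
feedback = run_cycle([10, 20, 30], nodes)
print(feedback)                    # [100, 101, 102]
print([n.command for n in nodes])  # [10, 20, 30]
```

The point of the sketch is that one pass of one frame both delivers every command and collects every reading, which is why a whole robot can be serviced in a single cycle.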

This matters enormously for balance and locomotion. When a humanoid robot is walking, its control system needs to read every joint position and force sensor, compute the next set of motor commands, and send those commands back out — all within a few milliseconds. Any delay or inconsistency in this loop can cause the robot to stumble or fall. EtherCAT's deterministic timing makes it well suited to this challenge, which is why platforms including Boston Dynamics' Atlas, the TALOS research humanoid, and the TOCABI 33-degree-of-freedom robot all use EtherCAT-based control architectures.

Before EtherCAT's rise, many humanoid robots relied on older protocols like CAN bus (Controller Area Network), borrowed from the automotive industry, or even direct wired connections between every sensor and a central computer. CAN bus is still found in simpler or smaller humanoids — it's robust, well-understood, and resistant to electrical noise — but its limited bandwidth becomes a bottleneck as the number of joints increases. Some robots also use RS-485 serial communication or USB for certain subsystems, though these are increasingly seen as legacy approaches for high-performance platforms.
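A rough calculation shows why CAN bandwidth runs out as joints are added. The figures below are assumptions for illustration: a classical CAN bus at its 1 Mbit/s maximum, roughly 111 bits for a standard frame with 8 data bytes (before bit stuffing), and one command frame plus one feedback frame per joint per cycle.

```python
CAN_BITRATE = 1_000_000  # bit/s, classical CAN maximum
BITS_PER_FRAME = 111     # approximate 8-byte standard frame, before bit stuffing (assumption)
FRAMES_PER_JOINT = 2     # one command and one feedback frame per cycle (assumption)


def bus_load(joints: int, loop_hz: int) -> float:
    """Fraction of the bus consumed; values above 1.0 do not fit."""
    bits_per_second = joints * FRAMES_PER_JOINT * loop_hz * BITS_PER_FRAME
    return bits_per_second / CAN_BITRATE


def max_joints(loop_hz: int) -> int:
    """Largest joint count a single bus can carry at the given loop rate."""
    return CAN_BITRATE // (FRAMES_PER_JOINT * loop_hz * BITS_PER_FRAME)


print(bus_load(40, 1000))  # 8.88, i.e. almost nine buses' worth of traffic
print(max_joints(1000))    # 4
print(max_joints(100))     # 45
```

Under these assumptions a single bus carries only a handful of joints at 1 kHz, so a CAN-based design either splits joints across several buses or runs a slower loop.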

Why Speed Matters

To put the timing challenge in perspective: human reflexes operate on a timescale of roughly 20–50 milliseconds for simple spinal cord responses. A humanoid robot's internal control loop typically needs to run at 1 kHz (one cycle per millisecond) or faster to maintain stable bipedal walking. Some advanced platforms push this to 5 kHz or beyond for particularly dynamic movements. Every component in the communication chain — the physical wiring, the protocol overhead, the processing time at each node — contributes to the total latency, and keeping that total below the control cycle time is non-negotiable.
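The budget constraint described above is simple arithmetic: the per-cycle contributions must sum to less than the cycle time. The sketch below uses made-up latency figures (the entries in `budget_us` are hypothetical, not measurements from any platform) to show how the same chain can fit a 1 kHz loop yet overrun a 5 kHz one.

```python
def cycle_time_us(loop_hz: float) -> float:
    """Length of one control cycle in microseconds."""
    return 1_000_000 / loop_hz


def slack_us(loop_hz: float, contributions_us: dict) -> float:
    """Time left in the cycle after all contributions; negative means overrun."""
    return cycle_time_us(loop_hz) - sum(contributions_us.values())


# Hypothetical per-cycle costs in microseconds.
budget_us = {
    "bus_round_trip": 250,
    "node_processing": 150,
    "control_compute": 400,
}

print(slack_us(1000, budget_us))  # 200.0, fits with margin
print(slack_us(5000, budget_us))  # -600.0, overruns the 200 us cycle
```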

The Robot's Software Backbone: ROS 2 and Middleware

Above the hardware layer sits the software middleware — the framework that lets different software modules communicate with each other. In the robotics world, this means the Robot Operating System, or ROS.

Despite its name, ROS is not an operating system in the traditional sense. It's a collection of tools, libraries, and conventions that provide a standardised way for different parts of a robot's software stack to exchange data. A camera driver can publish image frames, a perception module can subscribe to those frames and publish detected objects, and a planning module can subscribe to the object list and publish movement commands — all without any of these modules needing to know the internal details of the others.
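The camera, perception, and planning chain described above can be sketched with a tiny in-process publish-subscribe bus. This is plain Python written for illustration, not the ROS API: the `Bus` class, the topic names, and the message contents are all hypothetical, and a real ROS graph runs modules as separate nodes rather than callbacks in one process.

```python
from collections import defaultdict


class Bus:
    """Minimal topic-based publish-subscribe dispatcher (illustrative only)."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, callback):
        self._subscribers[topic].append(callback)

    def publish(self, topic, message):
        # Deliver the message to every callback registered on this topic.
        for callback in self._subscribers[topic]:
            callback(message)


bus = Bus()
commands = []  # stands in for the motor controller's inbox


def perception(frame):
    # Pretend to detect one object per camera frame.
    bus.publish("objects", [f"object_in_{frame}"])


def planner(objects):
    # Turn the first detected object into a movement command.
    bus.publish("commands", f"reach_for:{objects[0]}")


bus.subscribe("frames", perception)
bus.subscribe("objects", planner)
bus.subscribe("commands", commands.append)

bus.publish("frames", "frame_001")  # the camera driver publishes a frame
print(commands)  # ['reach_for:object_in_frame_001']
```

Each module only knows the topic names it reads and writes, so any one of them can be replaced without touching the others.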

The original ROS (now called ROS 1) was developed at Willow Garage in 2007 and became the de facto standard in academic robotics research. However, it had significant limitations for real-world deployment: it relied on a single central master process for node registration and discovery, had no built-in security, and wasn't designed for real-time operation.

ROS 2, the current generation, addresses these shortcomings. It replaces ROS 1's custom communication layer with DDS (Data Distribution Service), an industrial-grade middleware standard originally developed for defence and critical infrastructure. DDS uses a decentralised publish-subscribe architecture — there's no single master node whose failure would bring down the entire system. It also provides configurable Quality of Service (QoS) policies, so developers can assign high-reliability, low-latency settings to safety-critical joint commands while using lighter best-effort delivery for less time-sensitive data like camera feeds.
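The difference between reliable and best-effort delivery can be shown with a toy model of a link that drops out for a few ticks. This is a simplified simulation and not the DDS or ROS 2 API: `QosProfile`, `deliver`, and the `link_up` pattern are hypothetical names, and `depth` loosely mimics a keep-last history setting.

```python
from dataclasses import dataclass
from enum import Enum


class Reliability(Enum):
    RELIABLE = "reliable"
    BEST_EFFORT = "best_effort"


@dataclass(frozen=True)
class QosProfile:
    reliability: Reliability
    depth: int  # how many undelivered samples to keep for resending

    def __post_init__(self):
        if self.depth < 1:
            raise ValueError("depth must be at least 1")


def deliver(samples, link_up, qos):
    """Return the samples that arrive; link_up[i] says if the link works at tick i."""
    received, pending = [], []
    for sample, up in zip(samples, link_up):
        pending.append(sample)
        pending = pending[-qos.depth:]  # keep only the newest `depth` samples
        if up:
            received.extend(pending)    # everything queued gets through
            pending = []
        elif qos.reliability is Reliability.BEST_EFFORT:
            pending = []                # no retransmission: the sample is lost
    return received


outage = [True, False, False, True]
joint_qos = QosProfile(Reliability.RELIABLE, depth=10)
camera_qos = QosProfile(Reliability.BEST_EFFORT, depth=1)

print(deliver([1, 2, 3, 4], outage, joint_qos))   # [1, 2, 3, 4]
print(deliver([1, 2, 3, 4], outage, camera_qos))  # [1, 4]
```

In this model the reliable stream pays for completeness with late delivery of the queued samples, while the best-effort stream simply skips to the newest data, which is usually what a video feed wants.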

ROS 2 is now widely adopted across the humanoid robotics industry. It serves as the integration layer where high-level AI reasoning, computer vision, motion planning, and low-level motor control all come together. The NVIDIA Isaac robotics platform, used by companies including Agility Robotics, Boston Dynamics, and XPENG Robotics, integrates directly with ROS 2, as do most modern humanoid development environments.

External Communication: Connecting to the World

A humanoid robot also needs to communicate with the world beyond its own body. This includes links to human operators, cloud computing resources, other robots, and facility infrastructure like doors, lifts, and safety systems.

Wi-Fi

Standard Wi-Fi remains the most common external communication method for humanoid robots operating in indoor environments like factories, warehouses, and research labs. It offers high bandwidth (sufficient for streaming multiple video feeds), broad compatibility with existing infrastructure, and no per-device connectivity costs. The main limitations are range, potential interference in dense industrial environments, and latency that can be unpredictable under heavy network load.

5G and Cellular

For robots operating over larger areas or requiring more reliable connectivity, 5G cellular networks offer compelling advantages. 5G's low-latency capabilities (potentially under 10 milliseconds round-trip in ideal conditions) and high bandwidth make it suitable for real-time video streaming, cloud-based AI processing, and remote teleoperation. Several research programmes have explored using 5G to enable cloud-edge-terminal architectures where computationally intensive tasks like large language model inference run on remote servers while the robot handles time-critical control locally.

The trade-off is infrastructure dependency: 5G requires network coverage, a SIM card and data plan, and introduces a reliance on external network operators. For indoor deployment, many companies are exploring private 5G networks as an alternative to public cellular service.

Bluetooth and Short-Range Protocols

Bluetooth and Bluetooth Low Energy (BLE) see limited use in humanoid robots, typically for peripheral connections like handheld operator controllers or diagnostic tools. Their low bandwidth and limited range make them unsuitable for primary communication, but they serve a niche role in setup, configuration, and low-data-rate accessories.

Cloud Robotics and Edge Computing

One of the most significant trends in humanoid robot communication is the shift toward a cloud-edge-robot architecture. The core idea is straightforward: not everything needs to be computed on the robot itself.

Modern humanoid robots carry powerful onboard processors — NVIDIA's Jetson Thor platform, for example, is designed specifically for humanoid applications and delivers the compute needed for real-time perception, motor control, and on-device AI inference. But some tasks benefit from, or require, off-robot computing resources.

Cloud computing is used for training AI models on data collected from deployed robot fleets, running large foundation models that are too resource-intensive for onboard hardware, performing large-scale simulation, and aggregating operational data across many robots for fleet-wide learning. When a fleet of humanoid robots works in a warehouse, data from every unit can feed back into shared cloud models, making the collective fleet progressively more capable over time.

Edge computing — processing data on local servers close to the robot rather than in a distant data centre — provides a middle ground. An edge server in the same building can run heavier AI workloads with much lower latency than a remote cloud, while still offloading computation from the robot's limited onboard power and thermal budget. NVIDIA's OSMO framework, for instance, is designed to simplify robot training workflows across edge and cloud infrastructure.

The critical design question is always: what must be computed on the robot (for safety and latency reasons), what can be offloaded to a nearby edge server, and what belongs in the cloud? Safety-critical functions like balance control and collision avoidance must run onboard with guaranteed timing. Higher-level planning, learning, and analytics can tolerate the latency of edge or cloud connections.
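One way to picture this placement decision is as a rule that sends each task to the most remote tier whose network round trip still fits the task's deadline, with safety-critical work pinned to the robot. The sketch below is illustrative only: the round-trip figures in `TIERS` and the example deadlines are assumptions, and a real system would also weigh compute time, bandwidth, and link reliability.

```python
# (tier name, assumed network round trip in milliseconds), most remote first.
TIERS = [("cloud", 100.0), ("edge", 10.0), ("robot", 0.0)]


def place(deadline_ms: float, safety_critical: bool) -> str:
    """Pick where a task should run under the simple rule described above."""
    if safety_critical:
        return "robot"  # never depend on a network link for safety functions
    for name, round_trip_ms in TIERS:
        if round_trip_ms < deadline_ms:
            return name
    return "robot"      # nothing remote is fast enough


print(place(1.0, safety_critical=True))        # robot  (balance control)
print(place(50.0, safety_critical=False))      # edge   (e.g. grasp planning)
print(place(60_000.0, safety_critical=False))  # cloud  (fleet analytics)
```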

Multi-Robot Communication

As humanoid robots move from single-unit research platforms to fleet deployments in factories and warehouses, robot-to-robot communication becomes increasingly important. Multiple robots sharing a workspace need to coordinate their movements, avoid collisions with each other, negotiate access to shared resources (doorways, corridors, charging stations), and collaborate on tasks.

The ROS 2 ecosystem includes the Robotics Middleware Framework (RMF, now developed as Open-RMF), a collection of tools specifically designed for multi-robot coordination. RMF provides standardised interfaces for fleet management, traffic negotiation, and interoperability between robots from different manufacturers. This is a practical necessity, as few real-world facilities will source all their robots from a single vendor.

At a lower level, multi-robot communication can use direct peer-to-peer links (robots talking to each other over Wi-Fi or local networks) or a centralised approach where a fleet management server mediates all coordination. The choice depends on the deployment scale, reliability requirements, and the level of autonomy expected from individual robots.
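The centralised option can be sketched as a small reservation server that grants each shared resource to one robot at a time and queues the rest in arrival order. This is a hypothetical illustration, not the RMF API: `FleetServer`, the robot identifiers, and the resource name are invented for the example.

```python
from collections import deque


class FleetServer:
    """Grants exclusive access to shared resources, first come first served."""

    def __init__(self):
        self._holder = {}   # resource -> robot currently allowed to use it
        self._waiting = {}  # resource -> queue of robots waiting their turn

    def request(self, robot: str, resource: str) -> bool:
        """Return True if the robot may proceed, False if it must wait."""
        holder = self._holder.get(resource)
        if holder is None or holder == robot:
            self._holder[resource] = robot
            return True
        queue = self._waiting.setdefault(resource, deque())
        if robot not in queue:
            queue.append(robot)
        return False

    def release(self, robot: str, resource: str):
        """Free the resource and return the next robot granted access, if any."""
        if self._holder.get(resource) != robot:
            return None  # only the current holder can release
        queue = self._waiting.get(resource)
        next_robot = queue.popleft() if queue else None
        if next_robot is None:
            del self._holder[resource]
        else:
            self._holder[resource] = next_robot
        return next_robot


server = FleetServer()
print(server.request("robot_1", "doorway_a"))  # True
print(server.request("robot_2", "doorway_a"))  # False, queued
print(server.release("robot_1", "doorway_a"))  # robot_2
```

A peer-to-peer design would replace the server with an agreement protocol between the robots themselves, trading the single point of failure for more complex negotiation.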

Human-Robot Communication

There is another dimension to humanoid robot communication that goes beyond data protocols: how the robot communicates with people.

Modern humanoid robots increasingly support natural language interaction, enabled by large language models and vision-language models running either onboard or via cloud services. Figure AI's partnership with OpenAI, for example, produced systems where a robot can receive spoken instructions, interpret them using a vision-language model that simultaneously processes camera feeds, and respond with spoken confirmations — a far cry from the scripted command interfaces of earlier generations.

Beyond speech, humanoid robots communicate through body language, gesture, gaze direction, and even facial expressions on platforms that include articulated faces. These non-verbal channels are particularly important in collaborative settings where a robot works alongside humans. A robot that clearly signals its intended movement direction, or pauses and looks toward a human before proceeding through a shared space, is far safer and more intuitive to work with than one that moves without social cues.

Security and Safety

As humanoid robots become more connected, communication security becomes a serious concern. A robot with cameras, microphones, and network connectivity operating in a home, hospital, or factory is a potential vector for data breaches, surveillance, or even physical harm if its control systems are compromised.

ROS 2's adoption of DDS brought support for encrypted communication, authentication, and access control through the DDS Security specification, capabilities that core ROS 1 lacked. These protections generally have to be enabled and configured rather than being active by default. At the fieldbus level, protocols like EtherCAT support safety extensions (such as Fail Safe over EtherCAT, or FSoE) that detect communication faults and bring the affected devices to a safe state.
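The general idea of falling back to a safe state when communication fails can be illustrated with a watchdog timer on a joint drive. This is a sketch of the concept only, not the FSoE protocol: `CommandWatchdog`, the 5 ms timeout, and the integer millisecond timestamps are assumptions chosen for the example.

```python
class CommandWatchdog:
    """Disables torque if no valid command arrives within the timeout."""

    def __init__(self, timeout_ms: int):
        self.timeout_ms = timeout_ms
        self._last_command_ms = None  # no command seen yet

    def on_command(self, now_ms: int) -> None:
        """Call whenever a valid command is received."""
        self._last_command_ms = now_ms

    def torque_enabled(self, now_ms: int) -> bool:
        """Safe by default: torque stays off until commands are flowing."""
        if self._last_command_ms is None:
            return False
        return now_ms - self._last_command_ms <= self.timeout_ms


watchdog = CommandWatchdog(timeout_ms=5)
print(watchdog.torque_enabled(0))  # False, nothing received yet
watchdog.on_command(0)
print(watchdog.torque_enabled(3))  # True, command is fresh
print(watchdog.torque_enabled(6))  # False, link has gone quiet
```

Time is passed in explicitly so the behaviour is deterministic and easy to test; a real drive would read a hardware clock.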

Ongoing industry work on safety standards, including extensions to existing ISO standards for industrial and collaborative robots such as ISO 10218 and ISO/TS 15066, will increasingly define requirements for secure and reliable robot communication in deployment environments.

What's Next

Several trends are shaping the near future of humanoid robot communication:

- Higher-bandwidth internal buses. EtherCAT G (Gigabit EtherCAT) extends the current 100 Mbit/s standard to gigabit speeds, accommodating the growing data demands of high-resolution tactile skins, multiple onboard cameras, and more degrees of freedom in dexterous hands.

- Tighter cloud integration. As foundation models for robotics grow larger and more capable, the pipeline between cloud training and on-robot deployment will become more seamless, with over-the-air model updates and continuous learning from fleet data becoming routine.

- Private 5G and Wi-Fi 7. Purpose-built wireless infrastructure in factories and warehouses will provide the deterministic, low-latency connectivity that humanoid fleets require, reducing dependence on public networks.

- Standardisation pressure. As multi-vendor robot deployments become the norm, industry pressure for standardised communication interfaces — building on ROS 2, RMF, and emerging interoperability frameworks — will intensify.

- On-device AI growth. Paradoxically, more capable robots may need to communicate less. As onboard processors become more powerful (NVIDIA's Jetson roadmap is one indicator), more AI inference will move to the robot itself, reducing reliance on cloud links for routine operation and reserving external connectivity for training, updates, and fleet coordination.

The Bottom Line

Communication is the invisible infrastructure that makes everything else in a humanoid robot possible. The most sophisticated AI, the most precise actuators, and the most advanced sensors are useless if the data connecting them can't flow reliably, quickly, and securely. As humanoid robots move from lab demonstrations to real-world deployment at scale, the communication systems binding them together — internally, to each other, and to the broader digital world — will be just as important as the hardware and AI that get the headlines.

This article is part of Droid Brief's Resources section. For more foundational topics, see our Actuators & Motors, Sensors & Perception, and AI & The Robot Brain deep dives.