Humanoid robotics sits at the intersection of mechanical engineering, artificial intelligence, neuroscience, and computer science — which means it comes with a lot of jargon. This glossary defines the terms you'll encounter most often when reading about humanoid robots, explained in plain language with no prior knowledge assumed.

Terms are organised by category rather than alphabetically, so you can read through the section most relevant to what you're trying to understand. If you're looking for a specific word, use your browser's search function (Ctrl+F or Cmd+F) to jump straight to it.

The Basics

Humanoid Robot — A robot designed with a body plan that resembles the human form: a head, torso, two arms, and two legs. The key feature is the body layout, not how realistic it looks. Most humanoid robots look unmistakably like machines.

Android — A humanoid robot designed to look as much like a real human as possible, with realistic skin, hair, facial features, and natural movement. All androids are humanoid robots, but most humanoid robots are not androids. In practice the term is used regardless of gender, although it derives from Greek roots meaning "man-like" (andr-, "man", plus -oid, "resembling").

Gynoid — A humanoid robot designed to resemble a human female. Less commonly used than "android," which in practice tends to be applied regardless of gender presentation.

Bipedal — Walking on two legs. Bipedal locomotion is one of the defining characteristics of humanoid robots, and one of the hardest engineering problems in robotics.

Autonomous — Capable of operating without direct human control. In practice, autonomy exists on a spectrum. A fully autonomous humanoid robot would sense its environment, make decisions, and act without any human intervention. Most current humanoid robots have partial autonomy — they can perform specific tasks independently but require human oversight or instruction for others.

Teleoperation — Direct, real-time control of a robot by a human operator, typically using a remote controller, VR headset, or motion-capture suit. Several current humanoid robot demonstrations rely on teleoperation rather than full autonomy, which is an important distinction when evaluating what a robot can actually do on its own.

Robot-as-a-Service (RaaS) — A business model where companies lease robots and related services to customers on a subscription or usage basis rather than selling them outright. This is emerging as the primary commercial model for early humanoid robot deployments.

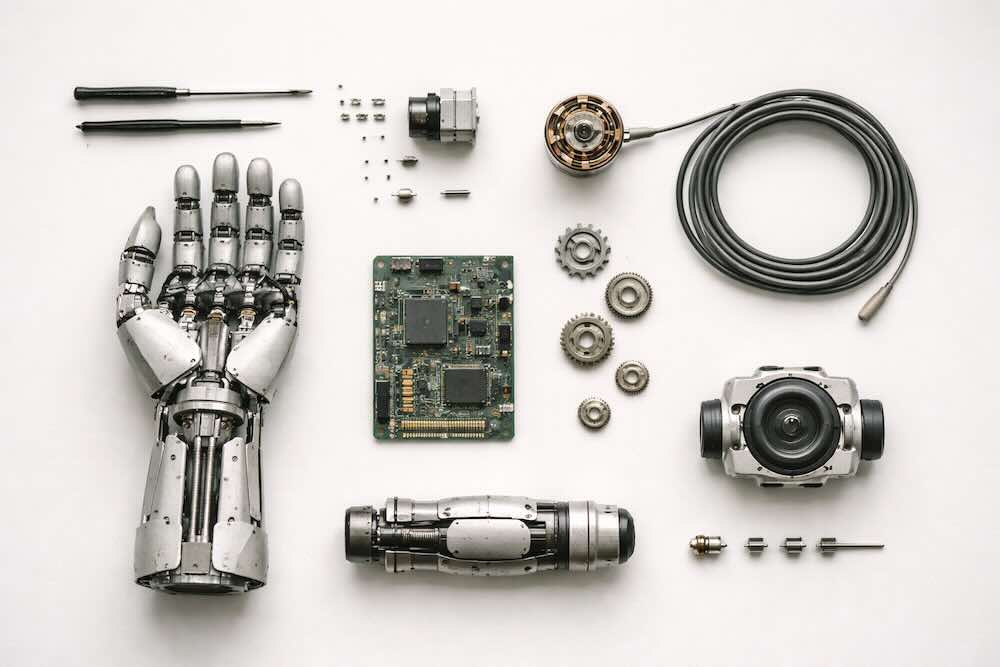

Body & Mechanical Systems

Actuator — Any device that converts energy into physical movement. Actuators are the muscles of a robot. In humanoid robots, they're typically electric motors, hydraulic pistons, or pneumatic systems that power each joint. The type, power, and efficiency of a robot's actuators are among the most important factors determining what it can physically do.

Degrees of Freedom (DOF) — The number of independent axes of movement a robot has. Each joint that can rotate or slide in one direction counts as one degree of freedom. A simple hinge (like your elbow) has one DOF. Your shoulder, which can rotate in multiple directions, has three. The human body has over 200 DOF. Most current humanoid robots have between 20 and 56, concentrated in the joints that matter most for practical tasks.

End Effector — Whatever is attached to the end of a robot's arm. In humanoid robots, this is typically a hand or gripper. In industrial robots, it might be a welding torch, drill, or suction cup. The end effector is the part that actually interacts with the world.

Gripper — A simplified mechanical hand designed to grasp objects. Grippers range from simple two-finger pinchers to more complex multi-finger designs. Many humanoid robots use grippers rather than fully articulated hands because they're cheaper, more reliable, and adequate for many tasks.

Dexterous Manipulation — The ability to handle objects with skill and precision, particularly using fingers to rotate, reposition, and control items in the hand. Human-level dexterous manipulation remains one of the greatest unsolved challenges in humanoid robotics.

Payload — The maximum weight a robot can carry or lift. For humanoid robots, this is usually specified for the arms (how much it can carry in its hands) and sometimes for the overall body (how much it can transport while walking).

Torque — A rotational force, usually measured in newton-metres (N·m). When a robot's motor turns a joint, the turning force it applies is its torque. Higher torque lets the robot exert more force at a given distance from the joint, which matters for lifting heavy objects or maintaining balance on uneven ground.
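
As a rough illustration of the idea, the torque a joint must supply to hold a load is the force multiplied by the perpendicular distance from the joint. The sketch below assumes a horizontal, massless arm, and the function name is hypothetical.

```python
G = 9.81  # gravitational acceleration in m/s^2


def holding_torque(payload_kg: float, arm_length_m: float) -> float:
    """Torque in N·m needed at the shoulder to hold a payload at the end of a
    horizontal, massless arm (torque = force * lever arm)."""
    force_n = payload_kg * G          # weight of the payload in newtons
    return force_n * arm_length_m     # lever arm is the full arm length


# Holding 5 kg at the end of a 0.6 m arm:
print(round(holding_torque(5.0, 0.6), 2))
```

Doubling either the payload or the arm length doubles the required torque, which is one reason long-armed robots need strong shoulder actuators.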

Compliance — The ability of a robot's joints or body to yield or flex in response to external forces, rather than being completely rigid. Compliant robots are safer to work around because they absorb impacts rather than resisting them. "Series Elastic Actuators" (SEAs) are a common way of building compliance into robot joints.

Series Elastic Actuator (SEA) — A type of actuator that includes a spring element between the motor and the joint. The spring absorbs shocks, provides force sensing, and makes the robot's movements more natural and safer for interaction with humans and fragile objects.
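
The force-sensing property can be sketched with Hooke's law: if the spring stiffness is known, the torque passing through the joint can be estimated from how far the spring has twisted. This is a minimal sketch assuming an ideal linear torsion spring; the function and parameter names are illustrative.

```python
def sea_joint_torque(motor_angle_rad: float,
                     joint_angle_rad: float,
                     spring_stiffness_nm_per_rad: float) -> float:
    """Estimate joint torque from spring deflection (torque = k * deflection).

    Assumes an ideal linear torsion spring between the motor and the joint.
    """
    deflection = motor_angle_rad - joint_angle_rad
    return spring_stiffness_nm_per_rad * deflection


# Motor side at 1.05 rad, joint side at 1.00 rad, spring stiffness 300 N·m/rad:
print(sea_joint_torque(1.05, 1.00, 300.0))
```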

Quasi-Direct Drive Actuator — A type of actuator that uses a low-ratio gearbox, offering a compromise between the high force of geared motors and the responsiveness of direct-drive motors. Increasingly popular in modern humanoid robots because they allow fast, dynamic movements while maintaining reasonable force output.

Hydraulic — Powered by pressurised fluid. Hydraulic actuators can generate enormous force relative to their size, which is why Boston Dynamics' original Atlas used them. The downsides are noise, maintenance complexity, potential for leaks, and difficulty in precise control. Most new humanoid robots are moving to electric actuators.

Soft Robotics — A field of robotics that uses flexible, deformable materials instead of rigid structures. Soft robotic techniques are increasingly being incorporated into humanoid robot hands and grippers to enable gentler, more adaptive grasping.

Artificial Muscle — A broad term for actuator technologies that aim to mimic the properties of biological muscle — contracting, expanding, and flexing rather than rotating like a motor. Various approaches are under development including pneumatic, electroactive polymer, and shape-memory alloy muscles. None have yet reached commercial viability in humanoid robots, but they represent a potential step-change in capability.

Sensors & Perception

LiDAR (Light Detection and Ranging) — A sensor that fires laser pulses and measures how long they take to bounce back, building a precise 3D map of the surrounding environment. Used in humanoid robots for navigation and obstacle avoidance.
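
The underlying arithmetic is simple: the pulse travels to the object and back, so the distance is half the round-trip time multiplied by the speed of light. A minimal sketch, with a hypothetical function name:

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def lidar_range_m(round_trip_time_s: float) -> float:
    """Distance to a surface from the round-trip time of a laser pulse."""
    # Divide by two because the pulse covers the distance twice (out and back).
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


# A pulse that returns after roughly 66.7 nanoseconds hit something about 10 m away.
print(round(lidar_range_m(66.7e-9), 2))
```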

IMU (Inertial Measurement Unit) — A sensor package that typically combines accelerometers and gyroscopes to measure a robot's orientation, acceleration, and rotational movement. The robotic equivalent of the human inner ear — essential for balance and body awareness.

Force/Torque Sensor — A sensor that measures the forces and torques (rotational forces) applied to or by a robot's joints or end effector. Critical for tasks that require controlled contact, such as picking up a fragile object without crushing it or detecting when the robot has bumped into something.

Tactile Sensor — A sensor designed to mimic the sense of touch, detecting pressure, texture, slip, or temperature on a robot's surface. Tactile sensing in humanoid robot hands is an active area of research and a key enabler of more human-like dexterous manipulation.

Proprioception — The sense of one's own body position and movement. In robotics, proprioception refers to the robot's internal awareness of where its limbs are, what angle its joints are at, and how its body is oriented in space. This information comes from encoders in joints, IMUs, and force sensors, and is essential for coordinated movement and balance.

Computer Vision — The field of AI that enables machines to interpret and understand visual information from cameras. In humanoid robots, computer vision is used for object recognition (what is that?), spatial understanding (where is it?), navigation, face recognition, gesture reading, and much more.

Depth Camera — A camera that captures not just colour images but also distance information for every pixel, producing a 3D representation of the scene. Common types include stereo cameras (which use two lenses, like human eyes) and structured-light or time-of-flight sensors. Widely used in humanoid robots for spatial awareness.
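
For the stereo case, depth can be sketched with the standard pinhole relation: depth equals focal length times the distance between the two lenses (the baseline), divided by how far a feature shifts between the two images (the disparity). The sketch assumes rectified images and a focal length expressed in pixels; the names are illustrative.

```python
def stereo_depth_m(focal_length_px: float,
                   baseline_m: float,
                   disparity_px: float) -> float:
    """Depth of a point seen by a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive (point at infinity or bad match)")
    return focal_length_px * baseline_m / disparity_px


# 700 px focal length, lenses 12 cm apart, feature shifted by 42 px between images:
print(stereo_depth_m(700.0, 0.12, 42.0))
```

Nearby objects produce large disparities and distant ones produce small disparities, so stereo depth estimates tend to get noisier with range.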

SLAM (Simultaneous Localisation and Mapping) — A technique that allows a robot to build a map of an unknown environment while simultaneously tracking its own position within that map. Essential for any robot navigating spaces it hasn't been pre-programmed to understand.

Sensor Fusion — The process of combining data from multiple different sensors (cameras, LiDAR, IMUs, force sensors, etc.) to build a more accurate and reliable understanding of the world than any single sensor could provide alone.
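
One of the simplest fusion schemes is a complementary filter, sketched below for a single tilt angle. It blends a gyroscope (smooth but drifting) with an accelerometer-derived angle (noisy but drift-free). This is one illustrative technique among many; the blend factor `alpha` and the function name are assumptions.

```python
def complementary_filter(prev_angle_rad: float,
                         gyro_rate_rad_s: float,
                         accel_angle_rad: float,
                         dt_s: float,
                         alpha: float = 0.98) -> float:
    """Fuse a gyro rate and an accelerometer tilt estimate into one angle."""
    # Short-term estimate: integrate the gyro rate from the previous angle.
    gyro_angle = prev_angle_rad + gyro_rate_rad_s * dt_s
    # Long-term correction: pull gently towards the accelerometer reading.
    return alpha * gyro_angle + (1.0 - alpha) * accel_angle_rad


# The robot is actually upright (0 rad), but the gyro has a 0.01 rad/s bias.
fused, gyro_only = 0.0, 0.0
for _ in range(500):                      # 5 seconds at 100 Hz
    gyro_only += 0.01 * 0.01              # pure integration keeps drifting
    fused = complementary_filter(fused, 0.01, 0.0, 0.01)
print(gyro_only, fused)
```

In the demo, the gyro-only estimate keeps drifting while the fused estimate settles at a small, bounded error.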

Movement & Control

Locomotion — The means by which a robot moves from place to place. For humanoid robots, this primarily means bipedal walking, but can also include running, climbing stairs, crouching, and navigating uneven terrain.

Gait — The pattern of movement used for walking or running. Humans have distinctive gaits; so do humanoid robots. Developing a stable, energy-efficient, natural-looking gait is one of the core challenges of humanoid robotics.

Zero Moment Point (ZMP) — A foundational concept in bipedal robot balance. The ZMP is the point on the ground where the horizontal components of the total moment (rotational force) produced by gravity and the robot's motion equal zero. As long as the ZMP stays inside the support polygon (the area enclosed by the feet in contact with the ground), the robot can stay upright. If the ZMP is pushed to the edge of that area, the robot starts to tip and will fall unless it takes a step or otherwise recovers. First developed by Serbian researcher Miomir Vukobratović in the 1960s, ZMP theory underpinned most bipedal walking robots for decades.
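
A common simplification, the linear inverted pendulum model, treats the robot as a point mass at constant height. Under that assumption the ZMP along one axis can be sketched as below. The model choice, the one-dimensional foot, and all names are assumptions made for illustration.

```python
def zmp_x(com_x_m: float, com_height_m: float, com_accel_x_m_s2: float,
          g: float = 9.81) -> float:
    """ZMP along x for a point mass at constant height (linear inverted pendulum)."""
    return com_x_m - (com_height_m / g) * com_accel_x_m_s2


def is_within_support(zmp_m: float, heel_x_m: float, toe_x_m: float) -> bool:
    """Check the ZMP against a one-dimensional foot that spans heel to toe."""
    return heel_x_m <= zmp_m <= toe_x_m


# Centre of mass 0.9 m high, directly above the ankle, foot spanning -0.05 m to 0.15 m.
gentle = zmp_x(0.0, 0.9, 0.5)   # mild forward acceleration
hard = zmp_x(0.0, 0.9, 2.0)     # aggressive forward acceleration
print(is_within_support(gentle, -0.05, 0.15), is_within_support(hard, -0.05, 0.15))
```

Accelerating the body forward shifts the required ZMP backward, which is why a sudden lunge from a standing start can demand a ZMP behind the heel.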

Dynamic Balance — The ability to maintain stability while moving. Unlike static balance (standing still), dynamic balance requires constant, active adjustment — shifting weight, repositioning limbs, compensating for momentum. Walking is inherently a process of dynamic balance: each step is a controlled fall, caught by the next footfall.

Whole-Body Control — A control approach that coordinates all of a robot's joints simultaneously, treating the entire body as a single integrated system rather than controlling the arms, legs, and torso separately. Essential for complex tasks like carrying an object while walking over uneven ground.

Inverse Kinematics — The mathematical process of calculating what angles each joint needs to be at in order to place the robot's hand (or foot, or any other part) at a specific point in space. If you know where you want the hand to go, inverse kinematics figures out how to get it there.
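
For a toy arm with two links moving in a plane, the calculation has a closed-form answer. The sketch below returns one of the two possible solutions (the one with a non-negative elbow angle) and includes forward kinematics to check the result. Real humanoid arms have more joints and usually need numerical solvers; the function names are illustrative.

```python
import math


def two_link_ik(x: float, y: float, l1: float, l2: float) -> tuple[float, float]:
    """Shoulder and elbow angles (radians) that place the hand at (x, y)."""
    dist_sq = x * x + y * y
    cos_elbow = (dist_sq - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)  # law of cosines
    if not -1.0 <= cos_elbow <= 1.0:
        raise ValueError("target is out of reach")
    elbow = math.acos(cos_elbow)
    shoulder = math.atan2(y, x) - math.atan2(l2 * math.sin(elbow),
                                             l1 + l2 * math.cos(elbow))
    return shoulder, elbow


def two_link_fk(shoulder: float, elbow: float, l1: float, l2: float) -> tuple[float, float]:
    """Hand position for given joint angles (forward kinematics)."""
    x = l1 * math.cos(shoulder) + l2 * math.cos(shoulder + elbow)
    y = l1 * math.sin(shoulder) + l2 * math.sin(shoulder + elbow)
    return x, y


angles = two_link_ik(0.4, 0.2, 0.3, 0.3)
print(angles, two_link_fk(*angles, 0.3, 0.3))
```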

Motion Planning — The process of computing a path and movement sequence for a robot to get from one configuration to another while avoiding obstacles, respecting joint limits, and maintaining balance. A robot reaching for an object on a shelf, for example, needs to plan a path for its arm that doesn't collide with other objects or the robot's own body.

Control Loop — The continuous cycle of sensing, processing, and acting that governs a robot's behaviour. A fast, well-tuned control loop is what allows a humanoid robot to maintain balance, adjust to disturbances, and execute smooth movements. These loops typically run hundreds or thousands of times per second.

PID Controller — A type of feedback controller (Proportional-Integral-Derivative) that is one of the most common and fundamental control mechanisms in robotics. It continuously adjusts output (like motor speed) to minimise the error between the desired state and the actual state.
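
A minimal PID controller fits in a few lines. The sketch below drives a toy first-order "motor" towards a target speed; the gains and the motor model are illustrative assumptions, not values from any real robot.

```python
class PID:
    """Textbook PID controller: output = Kp*e + Ki*integral(e) + Kd*de/dt."""

    def __init__(self, kp: float, ki: float, kd: float) -> None:
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None  # no derivative on the very first update

    def update(self, setpoint: float, measurement: float, dt: float) -> float:
        error = setpoint - measurement
        self.integral += error * dt
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate_motor(controller: PID, target: float,
                   steps: int = 2000, dt: float = 0.01) -> float:
    """Run the control loop against a toy motor whose speed lags the command."""
    speed = 0.0
    for _ in range(steps):
        command = controller.update(target, speed, dt)   # sense and process
        speed += (command - speed) * dt                  # act (toy motor model)
    return speed


print(simulate_motor(PID(kp=2.0, ki=1.0, kd=0.0), target=1.0))
```

The loop inside `simulate_motor` is also a small instance of a control loop: measure, compute a correction, apply it, repeat.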

Odometry — The use of sensor data (wheel encoders, IMUs, visual cues) to estimate a robot's change in position over time. Essentially, the robot's way of tracking where it's been and how far it's moved.
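
In its simplest form this is dead reckoning: repeatedly adding small movements to the last known pose. The sketch below assumes planar motion and ideal, noise-free speed and turn-rate estimates; the function name is hypothetical.

```python
import math


def integrate_odometry(pose: tuple[float, float, float],
                       forward_speed_m_s: float,
                       turn_rate_rad_s: float,
                       dt_s: float) -> tuple[float, float, float]:
    """Advance an (x, y, heading) pose by one time step of measured motion."""
    x, y, heading = pose
    x += forward_speed_m_s * math.cos(heading) * dt_s
    y += forward_speed_m_s * math.sin(heading) * dt_s
    heading += turn_rate_rad_s * dt_s
    return x, y, heading


pose = (0.0, 0.0, 0.0)
for _ in range(10):                       # one second of walking straight at 0.5 m/s
    pose = integrate_odometry(pose, 0.5, 0.0, 0.1)
print(pose)
```

Because every step builds on the previous estimate, small measurement errors accumulate over time, which is why odometry is usually combined with other sources such as SLAM.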

Artificial Intelligence & Learning

Reinforcement Learning (RL) — A type of machine learning where an AI agent learns by trial and error, receiving rewards for good outcomes and penalties for bad ones. In humanoid robotics, RL is used to train robots to walk, manipulate objects, and perform tasks without being explicitly programmed for every motion. The robot tries thousands or millions of variations and gradually discovers what works.
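
The trial-and-error loop can be sketched with tabular Q-learning on a toy problem: an agent in a five-cell corridor that is rewarded only for reaching the right-hand end. Real humanoid training uses neural networks and physics simulators; the environment, the hyperparameters, and the purely random exploration here are simplifying assumptions.

```python
import random

N_STATES = 5        # corridor cells 0..4; reaching cell 4 ends the episode
ACTIONS = (-1, +1)  # index 0 = step left, index 1 = step right


def step(state: int, action: int) -> tuple[int, float]:
    """Move within the corridor; reward 1.0 only on arriving at the last cell."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    reward = 1.0 if next_state == N_STATES - 1 else 0.0
    return next_state, reward


def train(episodes: int = 200, alpha: float = 0.5, gamma: float = 0.9,
          seed: int = 0) -> list[list[float]]:
    """Learn action values by acting randomly and updating from the rewards."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        while state != N_STATES - 1:
            a = rng.randrange(2)                              # explore at random
            next_state, reward = step(state, ACTIONS[a])
            target = reward + gamma * max(q[next_state])      # Q-learning target
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q


def greedy_policy(q: list[list[float]]) -> list[str]:
    """Best learned action for each non-terminal cell."""
    return ["left" if left > right else "right" for left, right in q[:-1]]


print(greedy_policy(train()))
```

The list returned by `greedy_policy` is a tiny example of a learned policy: a mapping from what the agent observes (its cell) to the action it should take.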

Imitation Learning — A technique where a robot learns to perform tasks by watching demonstrations, typically by a human. Instead of learning from scratch through trial and error, the robot observes how the task is done and attempts to replicate the behaviour. Also called "learning from demonstration" (LfD).

Sim-to-Real Transfer — The process of training a robot in a simulated virtual environment and then deploying the learned skills on a real, physical robot. Training in simulation is vastly faster and safer than training on real hardware, but the gap between simulation and reality (the "sim-to-real gap") is a significant challenge. Bridging it is one of the most active areas of research in humanoid robotics.

Foundation Model — A large AI model trained on broad, diverse data that can be adapted to many different tasks. In the context of humanoid robotics, foundation models are being developed that combine vision, language understanding, and physical action — enabling robots to understand instructions, perceive their environment, and plan movements within a single integrated system.

VLA Model (Vision-Language-Action) — A type of foundation model specifically designed for robotics that combines visual perception, natural language understanding, and physical action generation. A VLA model can potentially see what's in front of it, understand a spoken instruction ("pick up the red box and put it on the shelf"), and generate the motor commands to do it.

Large Language Model (LLM) — An AI model trained on vast amounts of text data, capable of understanding and generating natural language. In humanoid robotics, LLMs are being integrated to enable robots to understand verbal instructions, reason about tasks, and communicate with humans in natural language. Examples include OpenAI's GPT series and similar models.

Neural Network — A computing architecture inspired by the structure of the human brain, consisting of interconnected layers of artificial neurons. Neural networks are the foundation of most modern AI, including the perception, decision-making, and control systems in current humanoid robots.

Computer Vision (in AI context) — The application of neural networks and other AI techniques to interpret images and video. In humanoid robots, AI-powered computer vision enables object recognition, scene understanding, human pose estimation, and visual navigation.

End-to-End Learning — An approach where a single neural network learns to map directly from raw inputs (like camera images) to outputs (like motor commands) without breaking the problem into separate hand-designed stages. In humanoid robotics, end-to-end learning aims to create systems where the robot learns the entire process from perception to action as one integrated skill.

Policy (in RL) — In reinforcement learning, a policy is the strategy the robot has learned — essentially the set of rules that maps from what the robot perceives to what action it should take. When researchers say they're "training a locomotion policy," they mean they're training the robot's walking strategy.

Generalisation — The ability of a trained AI model or robot to perform well in situations it hasn't seen before. A robot trained to pick up mugs in a lab needs to generalise to picking up mugs of different shapes, sizes, and positions in a real kitchen. Generalisation is one of the biggest current challenges in robotics AI.

Embodied AI — Artificial intelligence that operates within and through a physical body, interacting with the real world through sensors and actuators. The idea that intelligence may require physical embodiment — that a robot with a body can develop forms of understanding that a disembodied AI running on a server cannot. Humanoid robots are a key platform for embodied AI research.

Simulation & Training

Digital Twin — A virtual replica of a physical robot or environment, kept in sync with the real world through sensor data. Digital twins allow engineers to test changes, predict behaviour, and train AI in a virtual copy before applying it to the real robot.

Physics Simulator — Software that models the physical behaviour of objects — gravity, friction, collisions, joint dynamics — allowing robots to be trained and tested in virtual environments. Major simulators used in humanoid robotics include NVIDIA Isaac Sim, MuJoCo, and PyBullet.

NVIDIA Isaac Sim — A robotics simulation platform developed by NVIDIA that uses GPU-accelerated physics and photorealistic rendering to create virtual training environments for robots. Widely used in the humanoid robotics industry for sim-to-real training.

MuJoCo (Multi-Joint Dynamics with Contact) — A physics engine designed for simulating complex multi-body systems like humanoid robots. Originally developed by Emo Todorov at the University of Washington, it was acquired by DeepMind in 2021 and released as open source in 2022. A standard tool in robotics research.

Domain Randomisation — A technique used in sim-to-real transfer where the simulation parameters (lighting, textures, physics properties, object sizes) are deliberately varied during training so that the robot's learned skills are robust enough to work in the messier, less predictable real world.
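
In code, the idea amounts to drawing a fresh set of simulation parameters for every training episode. The sketch below is simulator-agnostic: the parameter names and ranges are illustrative assumptions and do not correspond to any particular simulator's API.

```python
import random
from dataclasses import dataclass


@dataclass
class SimParams:
    friction: float         # ground friction coefficient
    light_intensity: float  # relative scene brightness
    object_scale: float     # size multiplier for objects in the scene


def sample_sim_params(rng: random.Random) -> SimParams:
    """Draw one randomised set of simulation parameters (ranges are made up)."""
    return SimParams(
        friction=rng.uniform(0.4, 1.2),
        light_intensity=rng.uniform(0.5, 1.5),
        object_scale=rng.uniform(0.8, 1.2),
    )


rng = random.Random(42)
for episode in range(3):
    params = sample_sim_params(rng)
    # A real pipeline would configure the simulator with `params` here
    # before running the training episode.
    print(episode, params)
```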

Software & Infrastructure

ROS (Robot Operating System) — An open-source framework that provides tools, libraries, and conventions for writing robot software. Despite its name, ROS is not a traditional operating system but a middleware layer that helps different parts of a robot's software communicate. ROS 2 is the current version, designed for commercial and safety-critical applications.

Middleware — Software that sits between a robot's operating system and its applications, handling communication between different modules (perception, planning, control). ROS is the most widely used middleware in robotics.

OTA Update (Over-the-Air Update) — Wirelessly delivered software updates that can add new capabilities or improve existing ones without physical modification. Several humanoid robot companies, notably those following Tesla's automotive model, are planning to improve their robots continuously through OTA updates after deployment.

Edge Computing — Processing data locally on or near the robot, rather than sending it to a remote cloud server. Edge computing reduces latency (delay) and allows faster responses, which is critical for real-time balance, obstacle avoidance, and other time-sensitive tasks.

Cloud Robotics — An approach where some of a robot's computation, storage, and learning is offloaded to remote cloud servers. Useful for tasks that require large-scale processing (like training AI models) but don't need split-second response times.

Fleet Learning — A concept where multiple robots in a fleet share their experiences and learning with each other, so that a skill learned by one robot is automatically available to all. Tesla's approach to autonomous driving uses this principle, and it's being extended to their Optimus humanoid programme.

Industry & Standards

Cobot (Collaborative Robot) — A robot designed to work safely alongside humans in a shared workspace, without the need for safety cages or barriers. Originally applied to industrial robot arms, the term is increasingly relevant to humanoid robots, which are inherently designed for human environments.

ISO 10218 — The international safety standard for industrial robots, specifying requirements for safe design, protective measures, and information for use. As humanoid robots move into commercial settings, compliance with this and related standards is becoming essential.

ISO/TS 15066 — A technical specification for collaborative robot systems, defining safety requirements for robots working in shared spaces with humans. Particularly relevant for humanoid robots operating in factories, warehouses, and other workplaces.

DARPA (Defense Advanced Research Projects Agency) — A research agency of the US Department of Defense that has funded some of the most significant humanoid robotics programmes in history, including the Atlas robot and the DARPA Robotics Challenge. Often described as the organisation where the most ambitious — and most generously funded — robotics research begins.

Total Addressable Market (TAM) — A business term you'll encounter frequently in humanoid robotics reporting. It refers to the total revenue opportunity that would be available if a product achieved 100% market share. For humanoid robots, industry analysts often cite the global market for physical labour as the TAM, a figure in the tens of trillions of dollars, which helps explain the scale of investment flowing into the sector.

Concepts & Phenomena

Uncanny Valley — A concept proposed by Japanese roboticist Masahiro Mori in 1970 suggesting that as a robot's appearance becomes more human-like, people's comfort increases — until the robot is almost but not quite convincingly human, at which point comfort drops sharply into unease or revulsion. This "valley" of negative response explains why most commercial humanoid robots are designed to look clearly mechanical rather than attempting photorealistic human appearance.

Form Factor — The overall shape and physical configuration of a robot. In humanoid robotics discussions, "form factor" is used to describe the body plan — bipedal humanoid vs. wheeled, quadruped, or other configurations — and the advantages and disadvantages each brings.

Drop-In Replacement — The concept that a humanoid robot could be deployed into a workspace or environment designed for humans without needing to modify that environment. This is one of the central commercial arguments for the humanoid form factor.

Sim-to-Real Gap — The difference between how a robot performs in a simulated environment and how it performs in the real world. Simulation can't perfectly model every aspect of physical reality — friction, lighting, material properties, unexpected objects — and skills that work flawlessly in simulation may fail in practice. Closing this gap is a major research challenge.

The "Last Mile" Problem — Borrowed from logistics, this term in humanoid robotics refers to the challenge of going from a robot that works well in controlled demonstrations to one that works reliably in the messy, unpredictable conditions of real-world deployment. The "last mile" between 95% reliability and 99.9% reliability often requires more engineering effort than the first 95%.
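
A quick calculation shows why those extra percentage points matter so much. If each task succeeds independently with a given reliability (a simplifying assumption), the chance of completing a long run of tasks without a single failure falls off quickly. The function name is hypothetical.

```python
def failure_free_probability(per_task_reliability: float, tasks: int) -> float:
    """Probability of completing `tasks` in a row with no failure,
    assuming each task succeeds independently."""
    return per_task_reliability ** tasks


for reliability in (0.95, 0.999):
    chance = failure_free_probability(reliability, 100)
    print(f"{reliability:.1%} per task -> {chance:.1%} chance of 100 clean tasks")
```

Under this assumption, a robot that is 95% reliable per task almost never gets through 100 tasks without a failure, while a 99.9% reliable robot usually does.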

Moravec's Paradox — The observation, named after roboticist Hans Moravec, that tasks which are easy for humans (like walking, picking up objects, recognising faces) are extremely hard for robots, while tasks that are hard for humans (like complex mathematics or chess) are relatively easy for computers. This paradox explains why humanoid robots — which must perform "easy" physical tasks — remain so challenging to build.

Embodiment — The principle that having a physical body fundamentally changes how an intelligent agent interacts with and understands the world. In robotics and AI philosophy, embodiment suggests that some forms of intelligence can only arise through physical interaction with the environment, which is a key argument for building humanoid robots rather than pursuing intelligence through software alone.

This glossary is a living document. As the humanoid robotics field evolves, new terms will emerge and existing ones will shift in meaning. We'll update this page regularly to keep pace. If you've encountered a term in Droid Brief's coverage that isn't listed here, let us know.
