How simulation is accelerating humanoid robot development — and why bridging the gap between virtual and real remains one of robotics' greatest challenges.

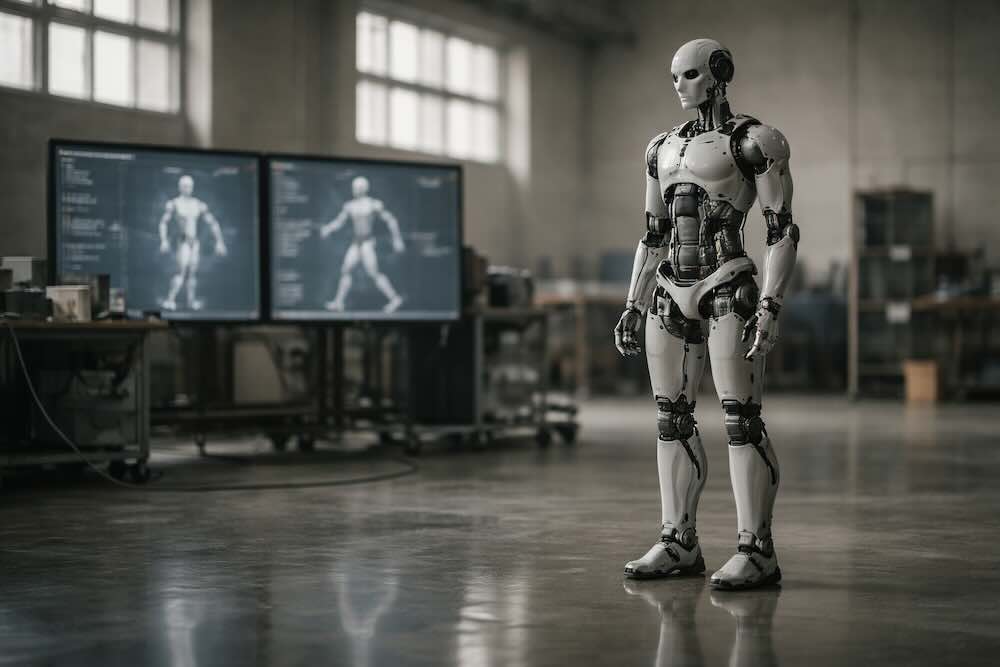

Every humanoid robot that walks, grasps, or balances in the real world has almost certainly practised first in a virtual one. Simulation has become the engine room of modern robotics development — a place where machines can fall over millions of times, break things without consequence, and accumulate years of experience in hours. The challenge, however, is that simulation is not reality. Surfaces feel different. Lighting shifts. Joints have imperfections that no model fully captures. Bridging this divide — known as sim-to-real transfer — is one of the most important and active frontiers in humanoid robotics today.

This article explains what sim-to-real transfer is, why it matters so profoundly for humanoid robots, how the leading simulation platforms work, and what techniques researchers and companies use to close the gap between a virtual training ground and the messy, unpredictable physical world.

Why Simulate at All?

Training a robot directly in the real world is expensive, slow, and dangerous. A humanoid learning to walk will fall — repeatedly. A hand learning to grasp will drop, crush, or fling objects. Every collision risks damaging hardware that may cost tens or hundreds of thousands of pounds. And even under ideal conditions, physical training runs at the speed of real time: an hour of practice takes an hour to complete.

Simulation changes these economics entirely. Inside a physics engine, a robot can be duplicated thousands of times, each copy running in parallel on GPU hardware. Falls cost nothing. Broken joints are reset with a line of code. And because simulated physics can run faster than real time — often many times faster — a single day of computation can yield the equivalent of months or years of physical practice.
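The arithmetic behind that claim can be sketched in a few lines. The figures below (4,096 parallel copies running at real-time speed for one day) are illustrative assumptions rather than numbers from any particular platform, and `simulated_experience_hours` is a hypothetical helper.

```python
def simulated_experience_hours(num_envs: int, speedup: float, wall_clock_hours: float) -> float:
    """Robot-hours of experience gathered across all parallel copies."""
    return num_envs * speedup * wall_clock_hours


# Illustrative assumptions: 4,096 copies, each at real-time speed, for one day.
hours = simulated_experience_hours(num_envs=4096, speedup=1.0, wall_clock_hours=24.0)
years = hours / (24 * 365)
print(f"{hours:,.0f} robot-hours, about {years:.1f} robot-years")
```

Under these assumptions a single day of computation yields 98,304 robot-hours, or roughly 11 robot-years. Any speed-up beyond real time multiplies that figure further.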

This is not a theoretical advantage. When NVIDIA demonstrated its Isaac GR00T Blueprint for synthetic motion generation in 2025, the company reported generating 780,000 synthetic training trajectories — equivalent to roughly nine continuous months of human demonstration data — in just eleven hours. Combining that synthetic data with real-world demonstrations improved the performance of its humanoid foundation model by 40 per cent compared to using real data alone.
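As a back-of-envelope check on those reported figures, the sketch below derives the implied speed-up and the implied average trajectory length. It assumes 30-day months of round-the-clock demonstration, an assumption of this example and not something NVIDIA has stated.

```python
TRAJECTORIES = 780_000      # reported in the text
WALL_CLOCK_HOURS = 11       # reported in the text
DEMO_MONTHS = 9             # reported in the text
HOURS_PER_MONTH = 30 * 24   # assumption: 30-day months, recorded continuously

demo_hours = DEMO_MONTHS * HOURS_PER_MONTH
speedup = demo_hours / WALL_CLOCK_HOURS
avg_seconds = demo_hours * 3600 / TRAJECTORIES

print(f"Implied speed-up over human demonstration: about {speedup:.0f}x")
print(f"Implied average trajectory length: about {avg_seconds:.0f} s")
```

On those assumptions, the pipeline produced data roughly 590 times faster than continuous human demonstration, with each trajectory averaging about 30 seconds.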

For humanoid robots in particular, simulation is not merely convenient — it is practically essential. The bipedal walking problem alone involves dozens of joints coordinating in real time, with balance depending on subtle interactions between foot contact, ground friction, body momentum, and actuator response. Training a walking policy from scratch on a physical robot would be prohibitively slow and would risk serious mechanical damage. Simulation allows developers to explore the vast space of possible gaits, terrains, and failure modes in a safe, parallelised environment before ever powering on a real machine.

The Reality Gap

If simulation were a perfect replica of the physical world, sim-to-real transfer would be trivial: train in simulation, deploy on hardware, job done. In practice, it is nothing of the sort. The discrepancy between simulated and real-world conditions — commonly called the reality gap — is the central obstacle in this entire field.

The gap manifests in several ways. Physics models are approximations: they simplify contact dynamics, friction, deformation, and inertia in ways that may not match the behaviour of a specific robot in a specific environment. Sensor noise in the real world — jitter in cameras, drift in inertial measurement units, latency in force sensors — is difficult to replicate faithfully. Actuators do not respond with the precision their specifications suggest: motors have backlash, cables stretch, and gears wear over time. Even lighting conditions, which affect computer vision systems, differ between a rendered scene and a factory floor.
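Two of these effects, actuator latency and sensor noise, are simple enough to sketch. The toy class below is a hypothetical illustration, not a model of any real actuator: commands take effect a fixed number of control steps late, and every reading carries Gaussian noise.

```python
import random
from collections import deque


class ImperfectJoint:
    """Toy joint: commands arrive late and readings are noisy (illustrative only)."""

    def __init__(self, delay_steps: int, noise_std: float, seed: int = 0):
        # Commands wait in this queue for `delay_steps` control steps.
        self._pending = deque([0.0] * delay_steps)
        self._rng = random.Random(seed)
        self._noise_std = noise_std
        self.position = 0.0

    def step(self, command: float) -> float:
        """Apply the oldest queued command and return a noisy position reading."""
        self._pending.append(command)
        self.position = self._pending.popleft()
        return self.position + self._rng.gauss(0.0, self._noise_std)


ideal = ImperfectJoint(delay_steps=0, noise_std=0.0)
real_like = ImperfectJoint(delay_steps=2, noise_std=0.01)
for t in range(4):
    print(t, ideal.step(1.0), round(real_like.step(1.0), 3))
```

A policy trained only against the ideal joint never experiences the two-step lag, which is exactly the kind of mismatch that surfaces on hardware.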

The result is that a policy trained to perfection in simulation can fail dramatically on real hardware. A walking gait that is stable in a simulator may stumble on an actual surface. A grasping strategy that works with idealised finger contacts may drop objects when confronted with the unpredictable friction of a real fingertip on a real surface. This is not a minor engineering detail — it is a fundamental challenge that has shaped the direction of robotics research for over a decade.

The Major Simulation Platforms

The sim-to-real pipeline begins with the simulator itself, and a small number of platforms now dominate the landscape for humanoid robot development.

NVIDIA Isaac Sim and Isaac Lab

NVIDIA's Isaac Sim is a GPU-accelerated robotics simulation platform built on the company's Omniverse infrastructure. It provides high-fidelity physics through NVIDIA PhysX, supports multi-sensor rendering at scale, and is designed to integrate directly with reinforcement learning and imitation learning workflows. Isaac Lab, an open-source framework built on top of Isaac Sim, is specifically optimised for robot learning at scale — supporting thousands of parallel environments running simultaneously on GPU hardware.

Isaac Sim has become a central platform for many of the major humanoid robot developers. Boston Dynamics uses Isaac Lab for training policies that can be deployed directly onto hardware. Fourier, the maker of the GR-1 and GR-2 humanoids, has ported its reinforcement learning workflows to Isaac Lab. The platform's integration with NVIDIA's broader ecosystem — including the GR00T foundation model for humanoids and the Cosmos world foundation models for synthetic data generation — makes it a comprehensive pipeline from simulation through to deployment.

The release of Isaac Sim 5.0 in mid-2025 brought further advances in physics realism, including improved sensor customisation, enhanced synthetic motion generation, and benchmarking tools. The platform supports import from standard robot description formats including URDF and MJCF, making it accessible to teams working with a range of robot hardware.

MuJoCo

MuJoCo (short for Multi-Joint dynamics with Contact) has been one of the most widely used physics engines in robotics research since its introduction in 2012. Originally developed at the University of Washington, it was acquired by DeepMind (now Google DeepMind) in 2021 and made freely available, then released as open-source software under the Apache 2.0 licence in 2022.

MuJoCo's strength lies in its contact model, which provides an efficient and accurate treatment of the complex interactions that occur when rigid bodies touch — a critical capability for simulating robotic manipulation and locomotion. It resolves contact forces using a convex optimisation approach that avoids the shortcuts common in gaming engines, which may sacrifice accuracy for stability. This makes it particularly well suited to tasks where the fidelity of contact physics directly affects policy quality.

In 2025, DeepMind and NVIDIA jointly announced MuJoCo-Warp, a GPU-accelerated version of MuJoCo designed to run on NVIDIA hardware. Early benchmarks suggest it can accelerate machine learning workloads by more than 70 times compared to CPU-based simulation. MuJoCo-Warp is being integrated into the Newton physics engine — a collaboration between NVIDIA, Google DeepMind, and Disney Research — which aims to become a unified, open-source engine optimised specifically for robot learning.

Other Notable Platforms

Several other simulation environments play important roles in the ecosystem. Gazebo, tightly integrated with the Robot Operating System (ROS 2), is widely used in academic settings and for mobile robot development. PyBullet, based on the Bullet physics engine, offers a lightweight and accessible option for rapid prototyping. Unity and Unreal Engine, originally designed for games and visual effects, are increasingly used for generating visually realistic training data — particularly for vision-based policies where photorealism matters more than physics fidelity.

Techniques for Bridging the Gap

Because no simulator perfectly replicates reality, researchers have developed a range of techniques to make policies more robust when transferred from simulation to the physical world. These approaches fall into several broad categories.

Domain Randomisation

Domain randomisation is perhaps the most influential and widely adopted technique in sim-to-real transfer. The core idea is disarmingly simple: rather than trying to make the simulation perfectly match reality, you make it deliberately imperfect in many different ways. By training a policy across a wide range of randomised conditions — varying friction coefficients, object masses, joint damping, actuator delays, lighting conditions, camera positions, and more — the resulting policy learns to be robust to variation itself. When deployed in the real world, the physical environment is simply treated as one more variation within the training distribution.
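In code, the idea amounts to drawing a fresh set of physical parameters at the start of every training episode. The sketch below is a minimal illustration: the parameter names and ranges are assumptions chosen for the example, and a real pipeline would write the sampled values into the simulator before each rollout.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class EpisodeParams:
    ground_friction: float
    payload_mass_kg: float
    joint_damping_scale: float
    actuator_delay_steps: int


def sample_episode_params(rng: random.Random) -> EpisodeParams:
    """Draw one randomised environment; the ranges are illustrative assumptions."""
    return EpisodeParams(
        ground_friction=rng.uniform(0.4, 1.2),
        payload_mass_kg=rng.uniform(0.0, 2.0),
        joint_damping_scale=rng.uniform(0.8, 1.2),
        actuator_delay_steps=rng.randint(0, 3),
    )


rng = random.Random(42)
for episode in range(3):
    params = sample_episode_params(rng)
    # A real pipeline would apply these to the simulator, then run the rollout.
    print(episode, params)
```

Because the policy never sees the same environment twice, it cannot overfit to any single set of simulated physics.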

This approach was brought to widespread attention by OpenAI's Dactyl project in 2018, which used a Shadow Dexterous Hand to reorient a block in-hand with human-like dexterity. The system was trained entirely in simulation using MuJoCo, with extensive randomisation of physical parameters, including object mass, finger friction, joint damping, and actuator forces. The resulting policy transferred directly to a real Shadow Hand without any fine-tuning on real data. In 2019, the team extended this work to solve a Rubik's Cube using Automatic Domain Randomisation (ADR), an extension of the technique that progressively widens the randomisation ranges, and with them the diversity and difficulty of the training environments, as the policy improves.
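The curriculum idea behind ADR can be sketched as a rule that widens a parameter's range when the policy is succeeding and narrows it when the policy is struggling. This is a deliberately simplified illustration, not OpenAI's algorithm: the function name, thresholds, and step size are all assumptions.

```python
def update_range(low, high, success_rate, step=0.05, expand_at=0.8, shrink_at=0.4):
    """Return a new (low, high) randomisation range based on recent performance."""
    if success_rate >= expand_at:
        # The policy copes with the current range, so make the task harder.
        return low - step, high + step
    if success_rate <= shrink_at and (high - low) > 2 * step:
        # The policy is failing, so back off (but never invert the range).
        return low + step, high - step
    return low, high


friction_range = (0.9, 1.1)  # start close to the nominal value
for success_rate in (0.9, 0.85, 0.3, 0.6):
    friction_range = update_range(*friction_range, success_rate)
    print(f"success={success_rate:.2f} -> range=({friction_range[0]:.2f}, {friction_range[1]:.2f})")
```

A production version would also clamp each range to physically meaningful bounds, for example keeping friction non-negative.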

Domain randomisation is now standard practice across the humanoid robotics industry. Tesla's Optimus programme uses reinforcement learning in simulation with domain randomisation to train walking gaits, and the company has publicly described its sim-to-real transfer pipeline as a core part of its development approach. The technique's appeal lies in its generality: it does not require detailed knowledge of the real-world parameters, only the ability to vary them across a plausible range.

System Identification

System identification takes the opposite approach to domain randomisation. Instead of training across a broad range of conditions, it attempts to make the simulator match the real world as precisely as possible. This involves measuring the physical properties of the actual robot — joint friction, motor response curves, link masses, centre-of-gravity positions — and calibrating the simulator to replicate those properties.

Research from 2025 suggests that system identification and domain randomisation are not mutually exclusive but complementary. For parameters that can be accurately measured — such as mass and inertia — system identification tends to outperform randomisation, because introducing unnecessary variation can actually make training harder without improving transfer. For parameters that are difficult to measure precisely — such as thrust coefficients in aerial robots or contact friction in manipulation — domain randomisation remains more effective. The best-performing sim-to-real pipelines typically combine both: calibrating what can be measured, randomising what cannot.
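A minimal sketch of "calibrate what can be measured, randomise what cannot" might look like the following. It fits a single viscous damping coefficient by least squares from bench measurements and leaves contact friction as a range. The measurements, the linear friction model, and the configuration keys are all hypothetical.

```python
def fit_viscous_damping(velocities, torques):
    """Least-squares fit of b in the model torque = b * velocity."""
    numerator = sum(v * t for v, t in zip(velocities, torques))
    denominator = sum(v * v for v in velocities)
    if denominator == 0.0:
        raise ValueError("need at least one non-zero velocity sample")
    return numerator / denominator


# Hypothetical bench data: joint velocity (rad/s) and friction torque (N*m).
velocities = [0.5, 1.0, 1.5, 2.0]
torques = [0.26, 0.49, 0.77, 0.98]
damping = fit_viscous_damping(velocities, torques)

sim_config = {
    "joint_damping": damping,                # calibrated from measurement
    "contact_friction_range": (0.4, 1.2),    # hard to measure, so randomised
}
print(sim_config)
```

Real system identification usually fits many parameters at once against recorded trajectories, but the division of labour is the same.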

Domain Adaptation

Domain adaptation uses machine learning to directly bridge the gap between simulated and real observations. Rather than modifying the simulator, it modifies the policy or the data to account for the difference. One common approach trains a neural network to transform simulated images into more realistic versions — or, equivalently, to extract features from both simulated and real images that are invariant to the visual differences between them.

Generative adversarial networks (GANs) have been used to translate rendered simulation frames into photorealistic images, allowing vision-based policies to operate in the real world without retraining. More recently, diffusion models have been applied to the same problem, transforming simulated perception data into realistic counterparts in a compressed latent space. A 2025 study demonstrated that this approach could improve autonomous driving transfer metrics by over 40 per cent.

In the context of humanoid robots, domain adaptation is particularly relevant for vision-based control, where the appearance gap between a rendered scene and a real camera feed can be substantial. Techniques that learn domain-invariant representations — features that capture the task-relevant structure of a scene regardless of whether it is simulated or real — are an active and promising area of research.
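The GAN and diffusion approaches described above are far too large to show here, but the underlying goal of making simulated and real feature distributions look alike can be illustrated with a much simpler stand-in: matching the mean and standard deviation of one feature. The feature values below are hypothetical, and moment matching is a swapped-in technique for illustration, not the method used in the studies mentioned.

```python
from statistics import mean, pstdev


def align_to_target(source, target):
    """Shift and scale source values so their mean and spread match the target's."""
    src_mean, src_std = mean(source), pstdev(source)
    tgt_mean, tgt_std = mean(target), pstdev(target)
    scale = tgt_std / src_std if src_std > 0 else 1.0
    return [(x - src_mean) * scale + tgt_mean for x in source]


sim_brightness = [0.80, 0.82, 0.78, 0.81]    # hypothetical rendered-image feature
real_brightness = [0.55, 0.65, 0.45, 0.60]   # hypothetical camera-image feature
adapted = align_to_target(sim_brightness, real_brightness)
print([round(x, 3) for x in adapted])
```

Learned approaches replace this hand-written statistic with a network that discovers which differences between the two domains matter.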

Sim-and-Real Co-Training

A more recent approach blends simulated and real-world data during training, rather than training exclusively in simulation and transferring afterwards. This co-training strategy uses large volumes of simulated data — which is cheap and parallelisable — supplemented by a smaller number of real-world demonstrations that anchor the policy to actual physical conditions.

Research presented at NeurIPS 2025 demonstrated a framework that uses optimal transport methods to align the joint distributions of observations and actions across simulated and real domains. The key insight is that aligning actions alongside observations provides a richer training signal than aligning visual observations alone. Policies trained with this approach successfully generalised to real-world scenarios — including long-horizon tasks like sorting objects into a closed drawer — from as few as 25 real demonstrations, with the rest of the training data coming from simulation.
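The data-mixing side of co-training can be sketched as a batch sampler that guarantees a fixed share of real demonstrations in every batch, however scarce they are. This illustrates only the mixing step, not the optimal transport alignment. The function name and the 25 per cent real fraction are assumptions of the example.

```python
import random


def make_mixed_batch(sim_data, real_data, batch_size, real_fraction, rng):
    """Sample a batch with a fixed share of real examples (with replacement)."""
    n_real = round(batch_size * real_fraction)
    batch = rng.choices(real_data, k=n_real) + rng.choices(sim_data, k=batch_size - n_real)
    rng.shuffle(batch)
    return batch


sim_data = [("sim", i) for i in range(10_000)]   # plentiful simulated trajectories
real_data = [("real", i) for i in range(25)]     # a handful of real demonstrations
batch = make_mixed_batch(sim_data, real_data, batch_size=64, real_fraction=0.25,
                         rng=random.Random(0))
print(sum(1 for source, _ in batch if source == "real"), "real examples out of", len(batch))
```

Sampling with replacement means the 25 real demonstrations are reused many times, so the real fraction is a tuning choice that trades anchoring against overfitting.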

Synthetic Data Generation

Beyond training control policies, simulation plays a critical role in generating training data for the perception and reasoning components of humanoid robots. Synthetic data — rendered images, depth maps, point clouds, and semantic annotations generated in simulation — can be produced at enormous scale with perfect ground-truth labels, something that is prohibitively expensive to obtain in the real world.

NVIDIA's Cosmos world foundation models, paired with Isaac Sim, represent the current state of the art in this area. The system can generate photorealistic synthetic video from 3D simulation scenes, providing training data for perception models that would otherwise require extensive manual annotation. The ability to vary lighting, textures, object placement, and camera angles programmatically means that a single simulated scene can yield millions of unique training examples.
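The combinatorics of programmatic variation are easy to see in a small sketch. The option lists below are hypothetical and the rendering step is omitted. The point is that every variant carries its ground-truth label by construction, with no annotation required.

```python
from itertools import product

# Hypothetical variation axes for one simulated scene.
LIGHTING = ["dawn", "noon", "overcast", "night"]
TEXTURES = ["wood", "steel", "tile"]
CAMERA_YAW_DEG = [0, 45, 90, 135]
OBJECT_SLOTS = ["left", "centre", "right"]


def scene_variants():
    """Yield every combination of settings together with its ground-truth label."""
    for lighting, texture, yaw, slot in product(LIGHTING, TEXTURES, CAMERA_YAW_DEG, OBJECT_SLOTS):
        yield {
            "lighting": lighting,
            "texture": texture,
            "camera_yaw_deg": yaw,
            "object_slot": slot,
            "label": {"object_slot": slot},  # known exactly, because we placed it
        }


variants = list(scene_variants())
print(len(variants), "labelled variants from one scene")
```

Four short lists already give 144 variants. Replacing the discrete lists with continuous ranges is what pushes the count into the millions.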

Sim-to-Real in Practice: How Humanoid Companies Use Simulation

The major humanoid robot developers have each built sim-to-real pipelines that reflect their particular hardware, target applications, and AI strategies.

Tesla has been the most vocal about its simulation-first approach to Optimus. The company uses reinforcement learning in simulation to develop locomotion policies, which are then transferred to the physical robot using what it describes as a zero-shot sim-to-real pipeline — meaning the policy runs on real hardware without additional fine-tuning. Tesla's VP of AI Software, Ashok Elluswamy, has described a "neural world simulator" that generates realistic video of Optimus performing tasks in factory environments, providing a closed-loop evaluation tool that shares infrastructure with Tesla's autonomous driving programme.

Boston Dynamics has integrated NVIDIA Isaac Lab into its development pipeline for the electric Atlas, using it to train policies that can be deployed directly to the robot. The company's Atlas humanoid has demonstrated sim-to-real grasping capabilities using the dexterous grasping workflow available in Isaac Lab, which uses an automated curriculum that progressively increases task complexity while randomising environmental conditions such as gravity, friction, and object weight.

Fourier, developer of the GR-1 and GR-2 humanoids, initially used NVIDIA Isaac Gym for reinforcement learning and has migrated to Isaac Lab. The GR00T N1 foundation model — NVIDIA's open humanoid robot model — has demonstrated language-conditioned bimanual manipulation on Fourier's hardware, with policies developed in simulation and transferred to the physical robot.

Companies including Agility Robotics, Figure AI, Apptronik, and 1X Technologies all use simulation-based training, and many have adopted NVIDIA's platform or MuJoCo-based workflows as part of their development stack. The trend is clear: simulation is not a nice-to-have for humanoid developers; it is foundational infrastructure.

The Remaining Challenges

Despite remarkable progress, sim-to-real transfer for humanoid robots is far from a solved problem. Several fundamental challenges remain.

Contact and deformation. Simulating what happens when a robot hand grasps a soft object — a piece of fruit, a cloth, a cable — remains extremely difficult. Real-world contact involves deformation at microscopic scales, and the computational cost of modelling this accurately grows rapidly. For humanoid robots expected to handle household objects or work alongside humans, this is a critical limitation.

Long-horizon tasks. Most successful sim-to-real demonstrations involve relatively short, well-defined tasks: picking up an object, walking a few metres, reorienting a block. Real-world deployment demands long-horizon behaviours — sequences of many actions over extended periods, where errors compound and recovery from unexpected situations is essential. Training and evaluating these in simulation is orders of magnitude more challenging.

Generalisation across environments. A humanoid trained in a simulated kitchen may struggle in a real kitchen that looks different, has different appliances, or is arranged in an unfamiliar layout. Achieving zero-shot generalisation — the ability to perform in environments never seen during training — remains an open research problem, although foundation models and large-scale synthetic data are beginning to make inroads.

Evaluation at scale. As humanoid robots become more capable, the number of conditions under which they must perform grows combinatorially: every additional task, environment, or edge case multiplies the number of cases to be tested. Testing a general-purpose humanoid across thousands of possible tasks, environments, and edge cases requires an evaluation infrastructure that is itself a significant engineering challenge. Simulation is the most promising tool for this, but only if the simulation is trusted to be representative of real-world performance.

The long tail of physics. Real-world environments contain an enormous variety of materials, surfaces, lighting conditions, and physical phenomena. No simulator captures all of them. The "long tail" of unusual or edge-case physical interactions — a wet floor, a deformed cardboard box, an unexpectedly heavy object — can cause failures that never appeared during simulated training. Closing this gap requires not just better simulators but better methods for identifying and addressing the specific discrepancies that matter most for a given task.

Where the Field Is Heading

Several converging trends suggest that sim-to-real transfer for humanoid robots will continue to improve rapidly over the coming years.

Foundation models and simulation are merging. The integration of vision-language-action models like GR00T N1.6 with simulation frameworks like Isaac Lab and Newton creates a pipeline where reasoning, perception, and physical control can all be developed, trained, and evaluated within a unified simulated environment. These models can learn from both simulated and real data, and their ability to generalise across tasks and embodiments reduces the need for task-specific sim-to-real engineering.

World foundation models are generating better data. Platforms like NVIDIA Cosmos can transform ground-truth 3D simulation scenes into photorealistic synthetic video, dramatically improving the visual realism of training data without requiring changes to the underlying physics engine. This addresses a key weakness of traditional simulation: it may model physics acceptably but render visuals that look nothing like the real world.

Physics engines are becoming faster and more accurate. The collaboration between NVIDIA, Google DeepMind, and Disney Research on the Newton engine, which integrates GPU-accelerated MuJoCo-Warp, aims to provide both the speed needed for massive parallel training and the accuracy needed for high-fidelity contact simulation. As these engines mature, the reality gap itself should narrow.

Co-training and continual learning are reducing dependence on pure simulation. Rather than treating sim-to-real as a one-time transfer, the field is moving towards pipelines that continuously refine policies using a blend of simulated and real-world data. This mirrors how the most capable AI systems in other domains learn: from vast quantities of synthetic data, anchored by smaller quantities of high-quality real-world experience.

Sim-to-real transfer is not a single technique but an entire discipline — one that sits at the intersection of physics simulation, machine learning, mechanical engineering, and computer vision. For humanoid robots, it is the bridge between ambition and deployment: the technology that determines whether a robot that performs brilliantly in a virtual world can do the same in ours. As simulation platforms grow more powerful and the techniques for crossing the gap grow more sophisticated, that bridge is becoming shorter — but it has not yet been fully crossed.